Serverless functions are event-driven, stateless compute units that execute in cloud-managed environments without requiring dedicated server provisioning. They improve web speed by eliminating server idle time, reducing Time to First Byte (TTFB), and scaling execution capacity to zero when demand drops.

Modern web architectures adopt serverless models to remove infrastructure overhead and deliver faster response cycles at lower operational cost.

What Are Serverless Functions?

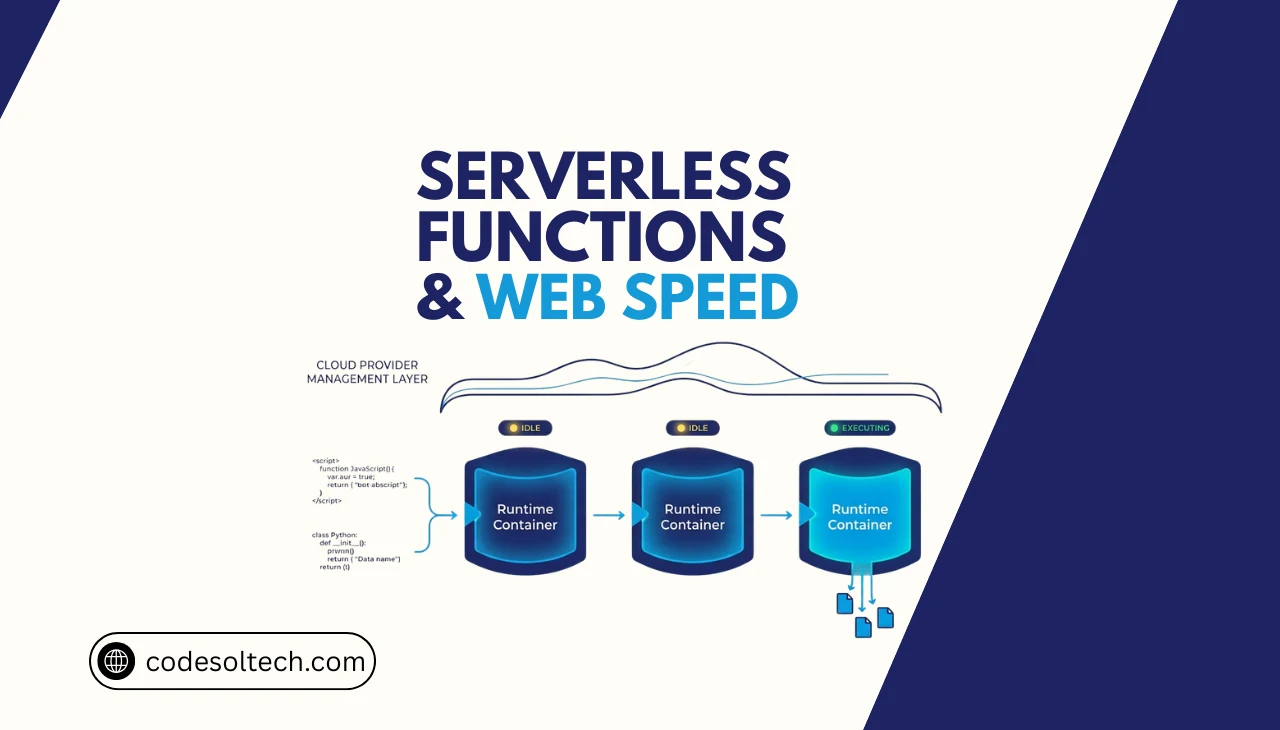

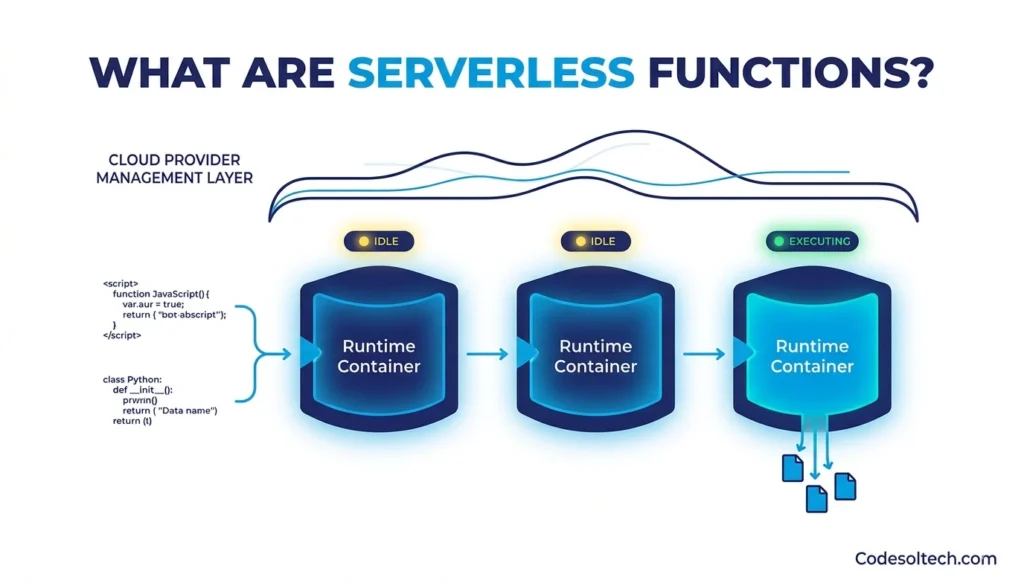

A serverless function is a discrete unit of application logic that a cloud provider executes on-demand in an isolated runtime container. The cloud provider allocates compute resources at invocation time and deallocates them after execution completes.

The developer deploys only the function code; the provider manages OS patching, load balancing, and scaling automatically.

Serverless functions operate on 3 core execution models:

- HTTP-triggered functions — invoked by a direct API or browser request

- Event-triggered functions — invoked by queue messages, database writes, or file uploads

- Scheduled functions — invoked at fixed time intervals via cron expressions
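As a rough illustration of the three models, the sketch below guesses which trigger produced a Lambda event by looking at its shape. It assumes the API Gateway HTTP API (payload format 2.0) layout for HTTP events, the `aws.events` source for EventBridge scheduled rules, and a `Records` list for queue and storage triggers; `classify_trigger` is a hypothetical helper name, not part of any AWS SDK.

```python
from typing import Any, Dict


def classify_trigger(event: Dict[str, Any]) -> str:
    """Best-effort guess at which execution model produced this event."""
    # HTTP APIs and function URLs nest the request under requestContext.http.
    if "http" in event.get("requestContext", {}):
        return "http"
    # EventBridge scheduled rules deliver events with source "aws.events".
    if event.get("source") == "aws.events":
        return "scheduled"
    # Queue, stream, and storage triggers batch their payloads in "Records".
    if "Records" in event:
        return "event"
    return "unknown"


def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    kind = classify_trigger(event)
    if kind == "http":
        # Only HTTP callers are waiting on a response body.
        return {"statusCode": 200, "body": "hello from an HTTP trigger"}
    return {"handled": kind}
```

In practice each trigger type usually gets its own function; a single dispatching handler is used here only to show the three event shapes side by side.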

AWS Lambda, Google Cloud Functions, and Cloudflare Workers are the 3 dominant serverless platforms by global market adoption. AWS Lambda processes over 1 trillion function invocations per month across its infrastructure, according to Amazon’s published platform metrics.

How Do Serverless Functions Improve Web Speed?

Serverless functions improve web speed through 4 mechanisms: elimination of server idle time, automatic horizontal scaling, lean execution contexts that reduce TTFB, and a pay-per-invocation pricing model that rewards fast code.

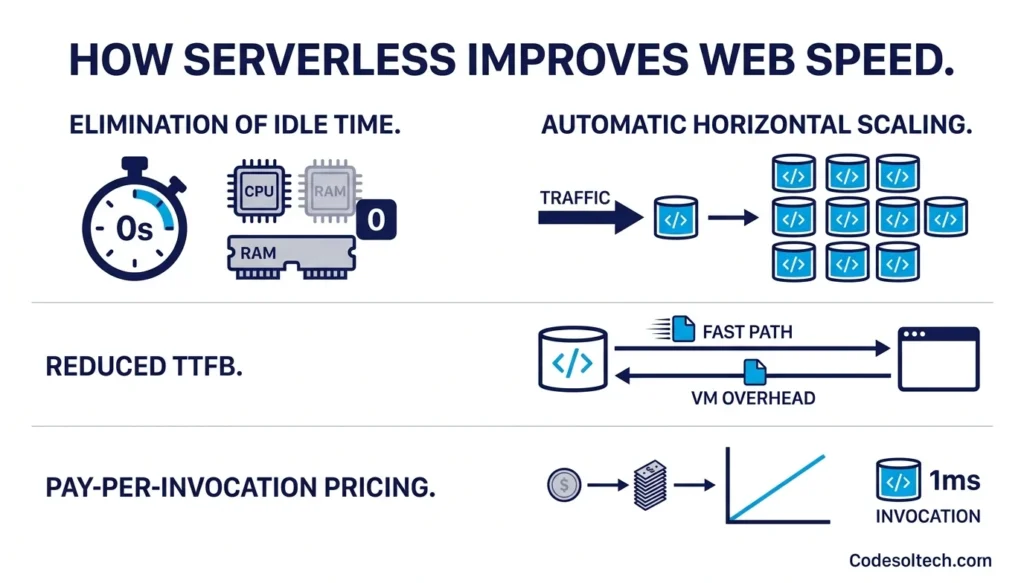

1. Elimination of Server Idle Time

Traditional web servers consume compute resources continuously, even when processing zero requests. A serverless function consumes zero CPU and zero memory between invocations. Because capacity is allocated per invocation rather than drawn from a fixed pool, requests do not wait behind an overloaded server handling more concurrent connections than its thread pool allows.

2. Automatic Horizontal Scaling

AWS Lambda scales each function by up to 1,000 additional concurrent executions every 10 seconds, up to the account concurrency limit (1,000 per region by default). Each invocation runs in a fully isolated execution context. This concurrency model avoids request queuing, a major cause of web latency spikes under high traffic load.

3. Reduced TTFB via Lean Execution Contexts

A Node.js Lambda function with a 128 MB memory allocation achieves a median execution duration of 3–8 milliseconds for simple JSON API responses. This contrasts with a VM-based Node.js server, which introduces 15–40 ms of overhead from OS scheduling and network stack initialization. Lower TTFB directly improves Core Web Vitals scores, specifically Largest Contentful Paint (LCP).

4. Pay-per-Invocation Pricing Model

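AWS Lambda bills in 1-millisecond increments based on actual execution duration and allocated memory. At the published x86 rate of $0.0000166667 per GB-second, a 128 MB function costs about $0.0000000021 per millisecond, so a 100 ms invocation costs roughly $0.00000021 in duration charges, plus a request fee of $0.20 per million invocations. This pricing model rewards lean, fast functions by tying financial cost directly to execution speed.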
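The arithmetic behind per-invocation pricing can be sketched as follows. The two price constants are assumptions taken from AWS list prices for x86 functions in us-east-1 and may change, so treat the output as an estimate rather than a quote.

```python
# Assumed list prices (x86, us-east-1); check current AWS pricing before relying on them.
PRICE_PER_GB_SECOND = 0.0000166667
PRICE_PER_REQUEST = 0.20 / 1_000_000


def compute_cost(memory_mb: int, duration_ms: float) -> float:
    """Duration charge for one invocation, excluding the request fee."""
    gb_seconds = (memory_mb / 1024) * (duration_ms / 1000)
    return gb_seconds * PRICE_PER_GB_SECOND


def invocation_cost(memory_mb: int, duration_ms: float) -> float:
    """Total charge for one invocation: duration plus the flat request fee."""
    return compute_cost(memory_mb, duration_ms) + PRICE_PER_REQUEST


if __name__ == "__main__":
    print(f"1 ms at 128 MB:   ${compute_cost(128, 1):.10f}")
    print(f"100 ms at 128 MB: ${compute_cost(128, 100):.10f}")
    print(f"with request fee: ${invocation_cost(128, 100):.10f}")
```

Under these assumed prices, the duration charge for 100 ms at 128 MB is about $0.00000021, which is of the same order as the flat request fee, so very short functions are dominated by the per-request charge.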

What Is Edge Computing and Why Does It Matter for Web Speed?

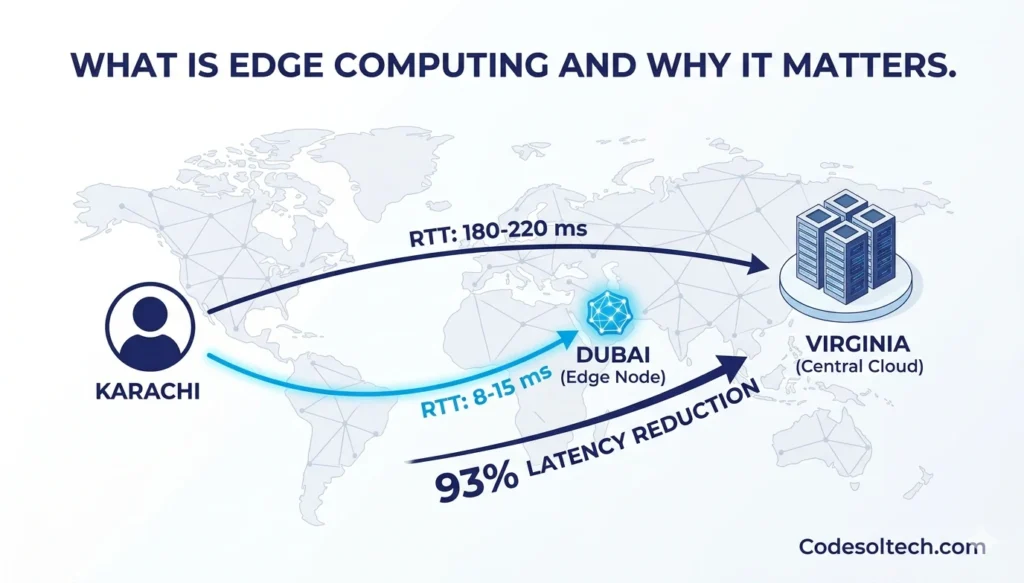

Edge computing is the practice of executing application logic at network nodes geographically close to the end user, rather than in a centralized cloud data center. Edge nodes reduce the physical distance that data travels, which directly cuts network round-trip time (RTT) and latency.

A user in Karachi requesting data from a server in Virginia experiences a baseline RTT of approximately 180–220 ms. The same request served from an edge node in Dubai reduces RTT to 8–15 ms. This latency reduction of roughly 93% is the primary performance advantage of edge computing over centralized serverless architectures.
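The percentage follows directly from the quoted RTT ranges. The short calculation below uses only those figures and shows the band the reduction falls in, depending on which ends of the two ranges are compared.

```python
def reduction_pct(before_ms: float, after_ms: float) -> float:
    """Percentage drop in round-trip time when moving from before_ms to after_ms."""
    return (before_ms - after_ms) / before_ms * 100


origin_rtt = (180.0, 220.0)  # Karachi to Virginia, from the text
edge_rtt = (8.0, 15.0)       # Karachi to Dubai, from the text

lowest = reduction_pct(origin_rtt[0], edge_rtt[1])   # 180 ms -> 15 ms
highest = reduction_pct(origin_rtt[1], edge_rtt[0])  # 220 ms -> 8 ms

print(f"{lowest:.1f}% to {highest:.1f}%")  # prints: 91.7% to 96.4%
```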

Edge Functions vs. Serverless Functions: 4 Key Differences

| Property | Serverless Functions (e.g., AWS Lambda) | Edge Functions (e.g., Cloudflare Workers) |
|---|---|---|
| Execution Location | Regional cloud data center | CDN edge node (<50 ms from user) |
| Cold Start Latency | 100–500 ms (first invocation) | 0–5 ms (V8 isolate model) |
| Runtime Environment | Full OS container (Node.js, Python, Java) | JavaScript V8 isolate (limited APIs) |
| Max Execution Duration | Up to 15 minutes (AWS Lambda) | Up to 30 seconds (Cloudflare Workers) |

Edge functions execute within V8 isolates — lightweight JavaScript runtimes that start in under 5 ms — eliminating the cold start penalty that affects traditional serverless containers. Cloud architecture decisions between edge and regional serverless depend on use-case latency requirements and runtime API surface needs.

AWS Lambda: Architecture, Performance, and Web Speed Impact

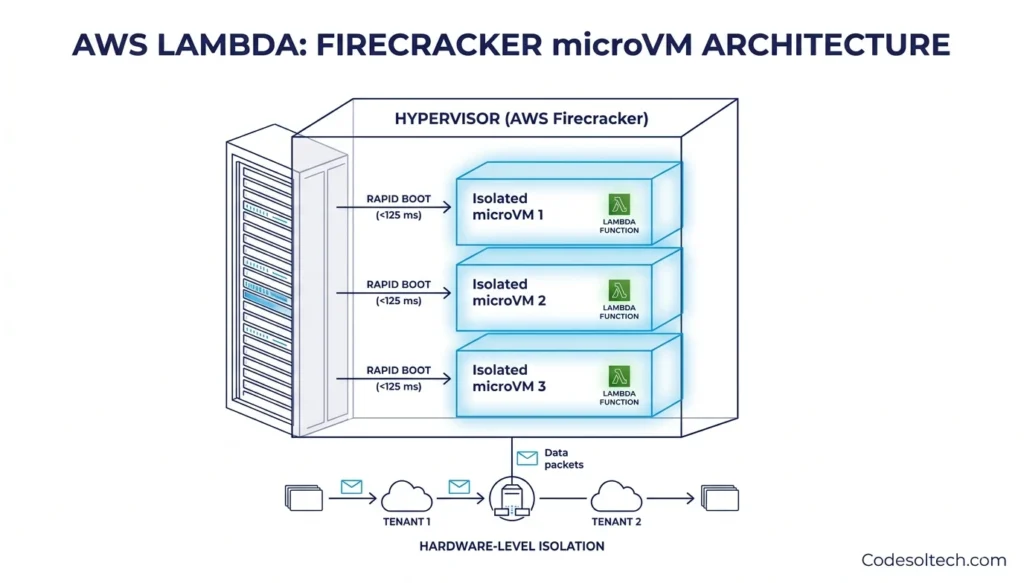

AWS Lambda is Amazon Web Services’ Function-as-a-Service (FaaS) platform, launched in 2014. Lambda executes functions inside Firecracker microVMs, a virtualization technology developed by AWS that boots a secure micro virtual machine in under 125 milliseconds. Each microVM provides hardware-virtualization-based isolation between tenant functions with far less overhead than a traditional virtual machine.

AWS Lambda Cold Start: The Primary Latency Problem

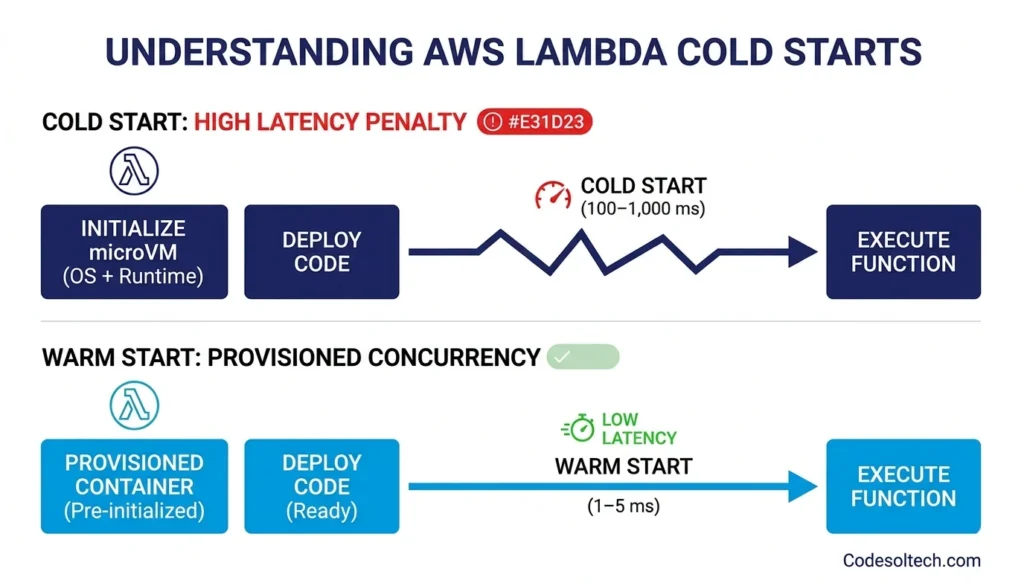

A cold start occurs when Lambda initializes a new execution environment for a function with no pre-warmed container available. Cold start duration ranges from 100 ms to 1,000 ms, depending on runtime, memory allocation, and deployment package size.

Java and .NET runtimes exhibit cold starts of 500–1,000 ms due to JVM and CLR initialization overhead. Node.js and Python runtimes exhibit cold starts of 100–300 ms.

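AWS users typically rely on 3 mechanisms to mitigate cold starts:

- Provisioned Concurrency: keeps a defined number of execution environments initialized, so requests routed to them skip the cold start entirely

- Lambda SnapStart: snapshots the initialized execution environment and resumes new environments from that snapshot, cutting cold start by up to 90%; it launched for Java (Java 11 and later), with Python and .NET support added later

- Scheduled warm-up pings: an EventBridge rule invokes the function every 5 minutes to keep an environment warm; this is a workaround rather than a guarantee, because only the pinged environments stay alive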
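A warm-up ping only helps if the handler returns quickly when it sees one. The sketch below is one way to do that in a Python handler, assuming the ping arrives as an EventBridge scheduled event (`source` set to `aws.events`). The module-level `_is_cold` flag and the `x-cold-start` header are illustrative names, not AWS features; they rely on the fact that module-level code runs once per execution environment.

```python
from typing import Any, Dict

# Module-level code runs once per execution environment, i.e. during a cold start.
_is_cold = True


def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    global _is_cold
    was_cold, _is_cold = _is_cold, False

    # Short-circuit scheduled pings so they stay cheap and skip business logic.
    if event.get("source") == "aws.events":
        return {"warmup": True, "cold_start": was_cold}

    # Real request: expose whether this invocation paid the cold-start cost.
    return {
        "statusCode": 200,
        "headers": {"x-cold-start": str(was_cold).lower()},
        "body": "ok",
    }
```

Logging or emitting the cold-start flag as a metric makes it possible to check whether pings, Provisioned Concurrency, or SnapStart are actually reducing the share of cold invocations.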

Lambda Memory Allocation and Execution Speed

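Lambda allocates CPU in proportion to memory. At 1,769 MB a function has the equivalent of 1 full vCPU, so a 128 MB function receives roughly 0.07 vCPU (128 / 1,769). Allocating 1,769 MB to a CPU-intensive function can reduce execution time by up to 6x compared to the 128 MB baseline. Because the per-millisecond price rises with memory (about 13.8x between these two settings), total cost drops only when the speed-up outpaces the price increase; smaller steps, such as 128 MB to 512 MB, can reach that break-even point more easily.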
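The trade-off between memory, CPU share, and cost can be checked with a few lines of arithmetic. This sketch assumes CPU scales linearly with memory up to the 1,769 MB mark and that price per millisecond is proportional to memory; `vcpu_share` and `relative_cost` are hypothetical helper names.

```python
FULL_VCPU_MB = 1769  # memory setting at which Lambda provides one full vCPU


def vcpu_share(memory_mb: int) -> float:
    """Approximate vCPU fraction, assuming linear scaling below 1,769 MB."""
    return memory_mb / FULL_VCPU_MB


def relative_cost(base_mb: int, new_mb: int, speedup: float) -> float:
    """Cost at new_mb relative to base_mb (1.0 means the same cost).

    Price per millisecond grows with memory, while billed time shrinks
    by the observed speed-up factor.
    """
    return (new_mb / base_mb) / speedup


if __name__ == "__main__":
    print(f"128 MB share: {vcpu_share(128):.3f} vCPU")
    print(f"128 -> 1769 MB at 6x faster: {relative_cost(128, 1769, 6.0):.2f}x cost")
    print(f"128 -> 512 MB at 4x faster:  {relative_cost(128, 512, 4.0):.2f}x cost")
```

A result below 1.0 means the larger setting is both faster and cheaper; above 1.0 it buys lower latency at a higher price, which may still be worthwhile for user-facing endpoints.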

Node.js API services built on Lambda perform optimally at 512 MB–1,024 MB memory allocations for most workloads.

Edge Computing Platforms and Web Speed Benchmarks

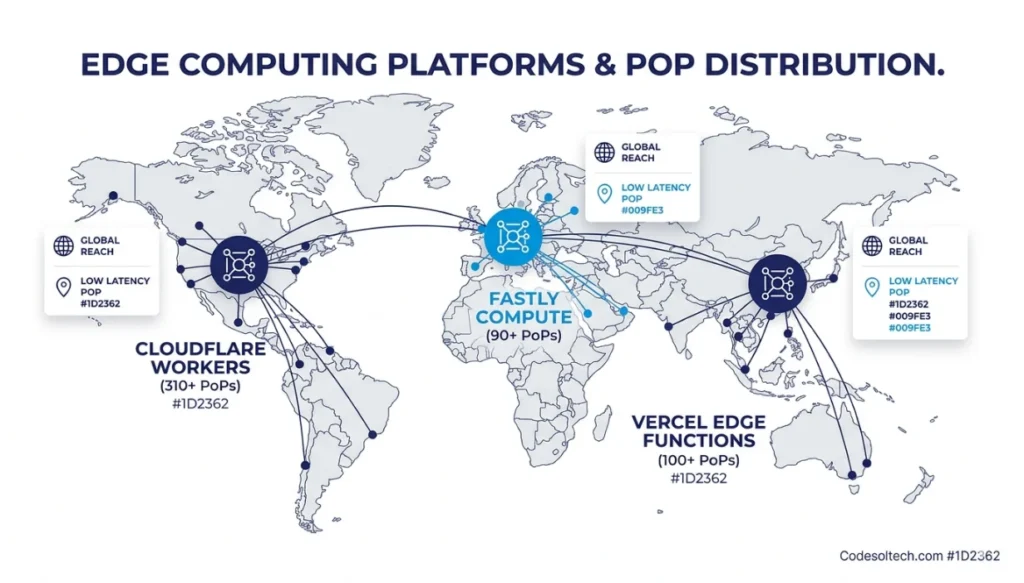

Edge computing platforms distribute serverless execution across globally distributed Points of Presence (PoPs). The 3 dominant edge platforms by PoP count are Cloudflare Workers (310+ PoPs), Fastly Compute (90+ PoPs), and Vercel Edge Functions (100+ PoPs). Each platform executes JavaScript or WebAssembly at the network edge, co-located with CDN cache nodes.

How Edge Computing Integrates with AWS Lambda

AWS Lambda@Edge is a CloudFront feature that replicates Lambda functions across AWS locations worldwide and runs them in CloudFront’s regional edge caches, closer to the viewer than a single-region deployment. Lambda@Edge executes on 4 CloudFront event triggers: viewer request, viewer response, origin request, and origin response. This allows API logic and content personalization to run near the end user and, when a function generates the response itself, to bypass the origin server entirely for cacheable and compute-light operations.
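As a minimal sketch of a viewer-request trigger, the handler below answers one path directly at the edge and lets every other request continue to the cache and origin. It follows the CloudFront event layout (`Records[0].cf.request`) and the generated-response format with string status codes and list-valued headers; the `/ping` path is a made-up example.

```python
from typing import Any, Dict


def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    request = event["Records"][0]["cf"]["request"]

    # Hypothetical health-check path answered without touching the origin.
    if request["uri"] == "/ping":
        return {
            "status": "200",
            "statusDescription": "OK",
            "headers": {
                "content-type": [{"key": "Content-Type", "value": "text/plain"}],
                "cache-control": [{"key": "Cache-Control", "value": "no-store"}],
            },
            "body": "pong",
        }

    # Returning the request object tells CloudFront to continue processing it.
    return request
```

Returning a response object from a request trigger is what allows the origin to be bypassed; returning the request unchanged hands control back to CloudFront.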

AWS Lambda@Edge imposes specific constraints compared to standard Lambda:

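- Maximum deployment package size: 1 MB for viewer-facing events and 50 MB for origin-facing events (vs. 250 MB unzipped for standard Lambda)

- Maximum execution duration: 5 seconds for viewer-facing events and 30 seconds for origin-facing events (vs. 15 minutes for standard Lambda)

- Supported runtimes: Node.js and Python only (vs. the wider runtime list of standard Lambda)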

- No VPC access: Lambda@Edge functions cannot connect to private VPC resources

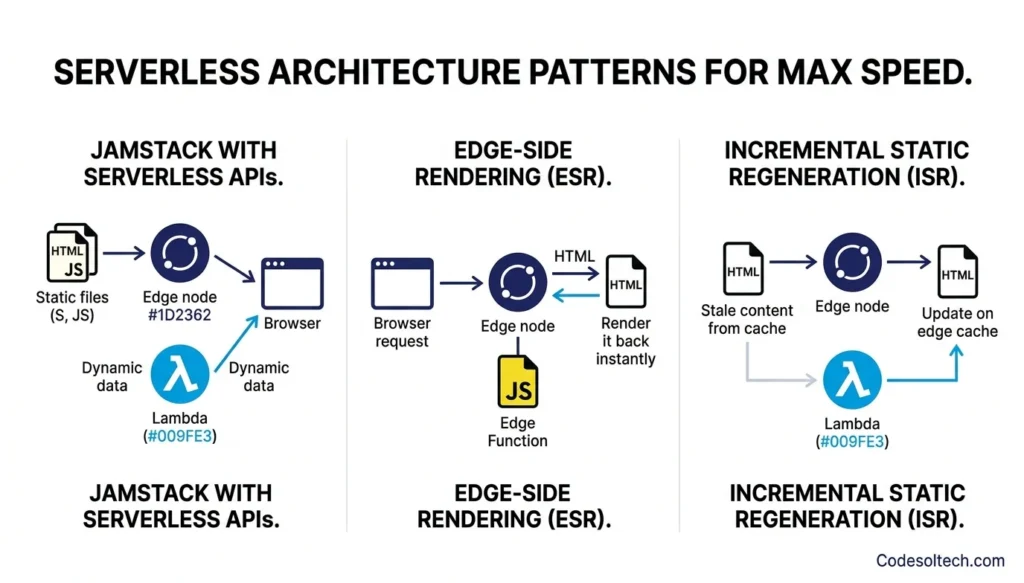

Serverless Architecture Patterns That Maximize Web Speed

Web architects apply 5 proven patterns to extract maximum speed from serverless and edge computing deployments.

- JAMstack with serverless APIs: static assets served from CDN edge, dynamic data fetched from Lambda endpoints; eliminates origin server TTFB for all static content

- Edge-side rendering (ESR): HTML rendered at the edge node using edge functions, combining the SEO benefits of server-side rendering with sub-10 ms delivery latency

- Incremental Static Regeneration (ISR): stale content served instantly from edge cache while a Lambda function regenerates updated HTML in the background

- API Gateway + Lambda proxy integration: an API Gateway HTTP API routes requests to Lambda with only a few milliseconds of routing overhead, up to the default account-level throttle of 10,000 requests per second per region

- DynamoDB + Lambda single-table design: co-locating Lambda and DynamoDB in the same AWS region keeps traffic on the AWS network and typical read latency in the single-digit milliseconds, while a single-table design lets one query fetch related items together
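The stale-while-revalidate idea behind ISR can be sketched with a small in-memory cache. This is an illustration of the pattern, not how any particular framework implements it: the `StaleWhileRevalidateCache` class, its `pending` queue, and `run_pending` (standing in for the background regeneration function) are all hypothetical.

```python
import time
from typing import Callable, Dict, List, Tuple


class StaleWhileRevalidateCache:
    def __init__(self, render: Callable[[str], str], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._render = render
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[str, float]] = {}
        self.pending: List[str] = []  # paths awaiting background regeneration

    def get(self, path: str) -> str:
        now = self._clock()
        entry = self._entries.get(path)
        if entry is None:
            # First request: nothing cached yet, so render synchronously.
            html = self._render(path)
            self._entries[path] = (html, now)
            return html
        html, rendered_at = entry
        if now - rendered_at > self._ttl and path not in self.pending:
            # Stale: serve the old copy immediately and queue a refresh.
            self.pending.append(path)
        return html

    def run_pending(self) -> None:
        """Stand-in for the background function that regenerates pages."""
        while self.pending:
            path = self.pending.pop()
            self._entries[path] = (self._render(path), self._clock())
```

The key property is that no request after the first ever waits on rendering: a stale hit returns the cached HTML at once, and the refreshed version appears on a later request.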

These patterns align with modern web architecture principles that prioritize Core Web Vitals compliance, specifically targeting LCP under 2.5 seconds and TTFB under 800 ms as defined by Google’s PageSpeed Insights thresholds.

Serverless Limitations That Directly Affect Web Speed

Serverless architectures introduce 4 performance constraints that developers must engineer around to maintain target web speed metrics.

- Cold start latency: new execution environment initialization adds 100–1,000 ms to the first request after a period of inactivity; Provisioned Concurrency avoids this at additional cost

- Maximum execution duration: AWS Lambda enforces a 15-minute maximum execution timeout; long-running web scraping or video processing tasks require Step Functions orchestration instead

- Network egress costs: Lambda functions that return large payloads (images, binary files) to the internet incur data transfer fees of about $0.09 per GB; pre-signed S3 URLs let clients fetch large assets without passing through Lambda

- Connection pool limitations: each concurrent execution environment opens its own database connection, so a traffic spike can exhaust the database’s connection limit; RDS Proxy or PlanetScale’s serverless driver mitigates connection exhaustion for relational database workloads
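One common way to limit connection churn is to create the database connection outside the handler so that warm invocations in the same execution environment reuse it. The sketch below uses the standard library's `sqlite3` purely as a stand-in for a real database driver; `get_connection` is a hypothetical helper.

```python
import sqlite3
from typing import Any, Dict, Optional

# Lives for the lifetime of the execution environment, not a single invocation.
_connection: Optional[sqlite3.Connection] = None


def get_connection() -> sqlite3.Connection:
    """Create the connection on first use, then reuse it on warm invocations."""
    global _connection
    if _connection is None:
        # Stand-in for connecting to a real database (assumption for this sketch).
        _connection = sqlite3.connect(":memory:")
    return _connection


def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    conn = get_connection()
    row = conn.execute("SELECT 1").fetchone()
    return {"statusCode": 200, "body": str(row[0])}
```

This reduces connections to one per concurrent environment rather than one per request; a proxy or pooled driver is still needed when concurrency itself exceeds what the database can accept.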

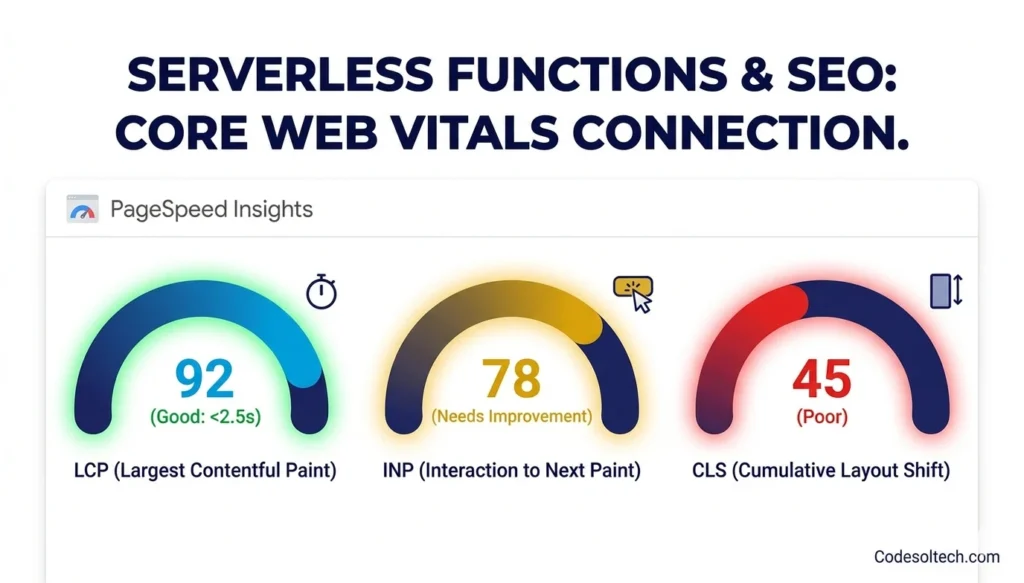

Serverless Functions and SEO: The Core Web Vitals Connection

Google uses 3 Core Web Vitals as ranking signals: Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). Serverless functions most directly influence LCP by reducing TTFB, a major contributor to LCP in server-rendered applications.

A serverless architecture delivering TTFB under 200 ms enables LCP scores below 2.5 seconds for 95% of users on 4G connections. Google classifies LCP under 2.5 seconds as “Good” — the threshold that eliminates Core Web Vitals as a negative ranking signal.
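The thresholds can be turned into a small rating helper for field or lab measurements. The boundary values below are the publicly documented "good" and "poor" cut-offs for LCP, INP, CLS, and TTFB (TTFB is a supporting metric rather than a Core Web Vital); the `rate` function and metric keys are illustrative names.

```python
from typing import Dict, Tuple

# (good upper bound, needs-improvement upper bound) per metric.
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "lcp_ms": (2500, 4000),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
    "ttfb_ms": (800, 1800),
}


def rate(metric: str, value: float) -> str:
    """Classify a measurement as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    for metric, value in [("ttfb_ms", 180), ("lcp_ms", 2400), ("inp_ms", 350)]:
        print(f"{metric}={value}: {rate(metric, value)}")
```

Google assesses these metrics at the 75th percentile of page loads, so the helper is best applied to that percentile rather than to a single measurement.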

Optimizing Core Web Vitals through infrastructure decisions produces compounding SEO benefits beyond direct ranking improvements, including reduced bounce rates and higher session engagement.

Edge computing amplifies this SEO impact by delivering pre-rendered HTML from nodes within 10–20 ms of the user’s geographic location. Edge-side rendering (ESR) achieves both the crawlability of server-side rendering and the speed of static delivery — a combination that maximizes Googlebot crawl efficiency and user-facing performance simultaneously.

Final Words

Serverless functions and edge computing are not trends; they are the current standard for high-performance web architecture. AWS Lambda eliminates idle server cost and scales to thousands of concurrent executions in seconds. Edge computing cuts network RTT by up to 93% by executing logic at nodes close to the user.

Together, they deliver measurable TTFB reductions, stronger Core Web Vitals scores, and lower infrastructure cost per request.
