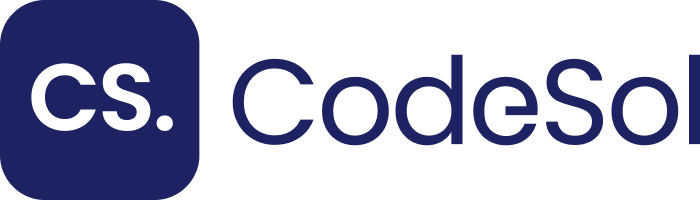

Python is the primary programming language powering AI-driven web development in 2025. Its readable syntax, vast ecosystem of machine learning libraries, and native support for asynchronous operations make it the default choice for engineers who build intelligent web applications. Three entities define Python’s dominance in this space: Django, Flask, and AI Agents.

Python executes AI model inference, manages REST API endpoints, and coordinates multi-agent workflows — all within a single unified language stack. This reduces context-switching overhead and cuts development time by up to 40% compared to polyglot stacks, according to the Stack Overflow Developer Survey 2024.

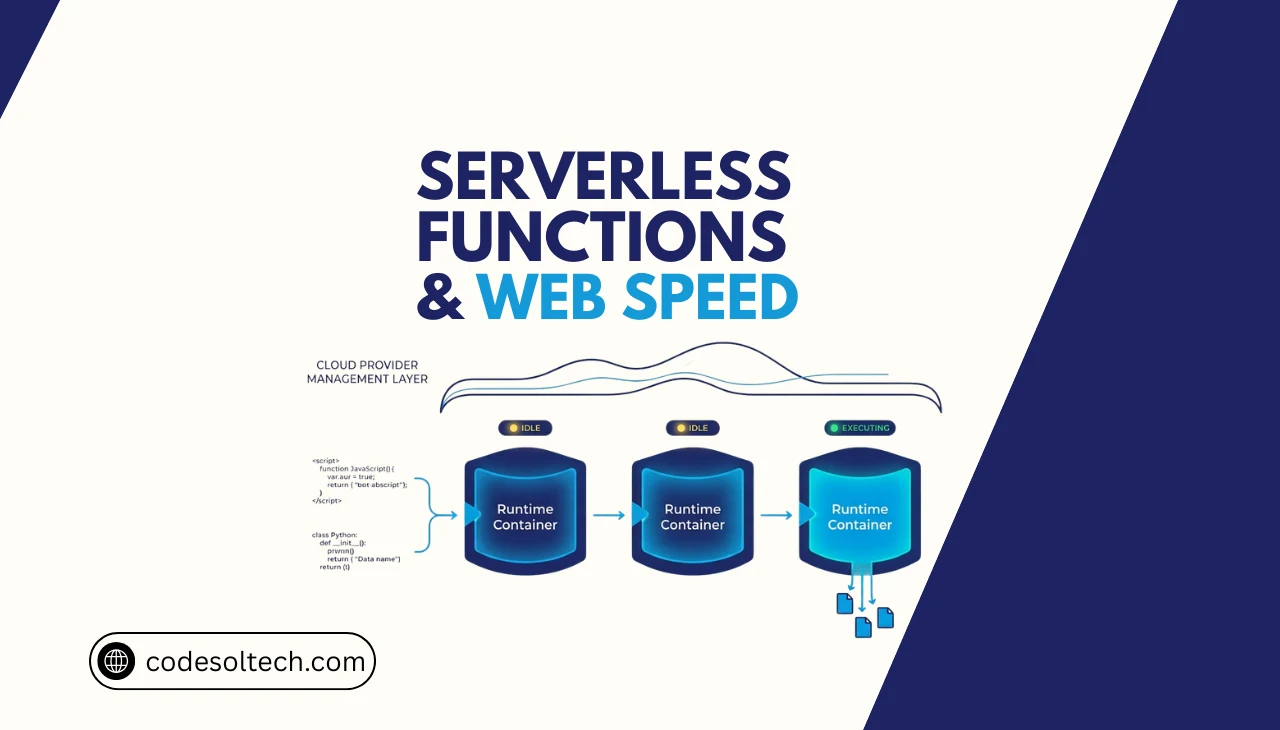

Python as the Foundation of AI Web Architecture

Python occupies the backend layer of AI web architecture, sitting between the data pipeline and the client-facing interface. It processes inputs from users, routes requests to AI models, and returns structured responses through JSON or GraphQL endpoints. Frameworks like Django and Flask expose these capabilities as scalable web services.

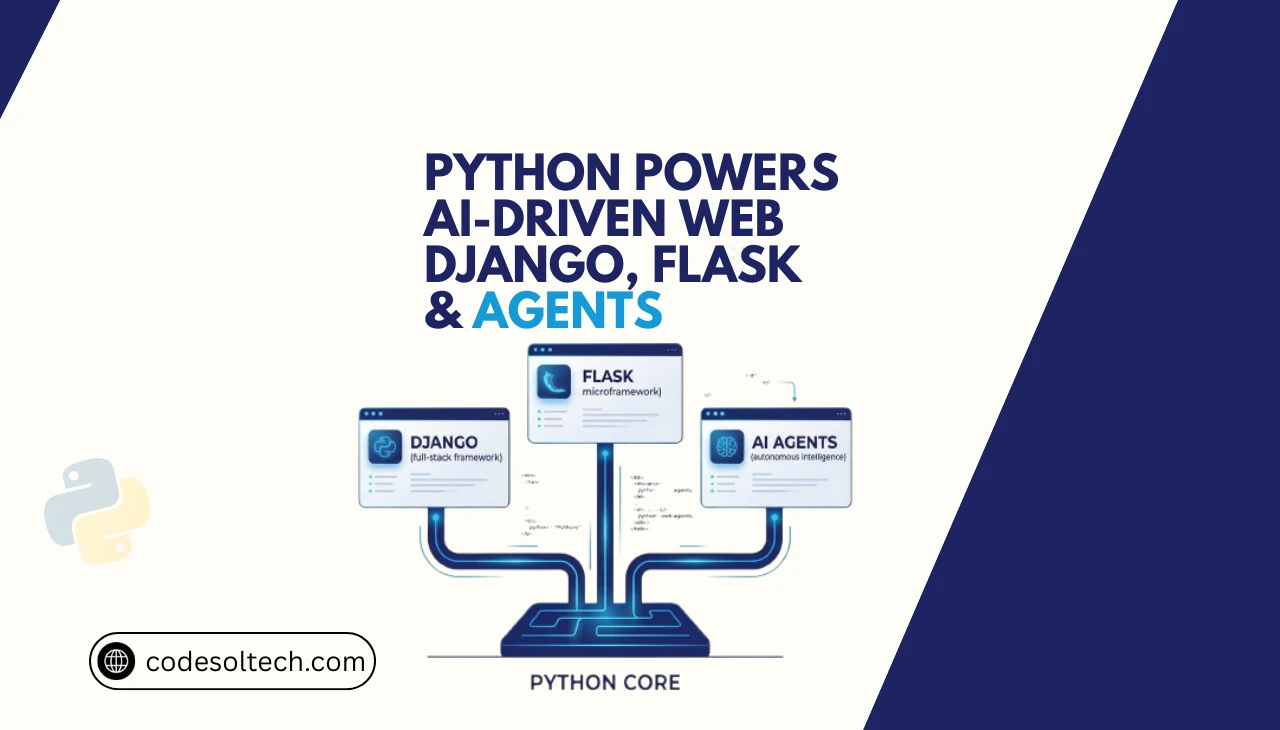

Python’s async toolkit centers on 3 building blocks: asyncio and concurrent.futures from the standard library, plus the third-party aiohttp package. Together they enable non-blocking I/O for real-time AI inference pipelines. Under high concurrency, non-blocking I/O keeps a worker from idling on each network call, which can remove much of the queueing delay that otherwise adds hundreds of milliseconds to server response times.
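To make the non-blocking idea concrete, here is a minimal sketch using only asyncio. The `fake_inference` coroutine is a hypothetical stand-in for a network call to a model server, and the 0.1 second delay is an assumption chosen for illustration.

```python
import asyncio
import time


async def fake_inference(prompt: str, delay: float = 0.1) -> str:
    """Hypothetical stand-in for a network call to a model server."""
    await asyncio.sleep(delay)  # yields control while "waiting on I/O"
    return f"result for {prompt!r}"


async def run_batch(prompts: list[str]) -> list[str]:
    # gather() runs the coroutines concurrently and preserves input order
    return await asyncio.gather(*(fake_inference(p) for p in prompts))


def timed_batch(prompts: list[str]) -> tuple[list[str], float]:
    """Run the batch and report wall-clock time in seconds."""
    start = time.perf_counter()
    results = asyncio.run(run_batch(prompts))
    return results, time.perf_counter() - start
```

Because the five simulated calls overlap, `timed_batch(["a", "b", "c", "d", "e"])` should finish in roughly the time of one call rather than five.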

Python integrates natively with 4 leading AI/ML runtimes: TensorFlow, PyTorch, Scikit-learn, and Hugging Face Transformers. Each runtime exposes a Python-first API, which eliminates the need for language-bridging layers and reduces inference overhead.

Python’s Position in the Full AI Web Stack

- Data Ingestion Layer: Python scripts consume APIs, databases, and web scraping pipelines using requests, BeautifulSoup, and Scrapy.

- Model Serving Layer: Python hosts trained ML models via WSGI/ASGI servers (Gunicorn, Uvicorn) exposed through Django or Flask.

- Agent Orchestration Layer: Python coordinates AI Agents using frameworks like LangChain, AutoGen, and CrewAI.

- Client Response Layer: Python serializes AI outputs into JSON, HTML, or streaming SSE responses delivered to the browser or mobile client.
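As an illustration of the Client Response Layer, the sketch below formats AI output as Server-Sent Events frames using only the standard library. The event names `token` and `end` and the payload fields are assumptions for this example, not part of any framework's API.

```python
import json
from typing import Iterable, Iterator, Optional


def format_sse(data: dict, event: Optional[str] = None) -> str:
    """Encode one payload as a Server-Sent Events frame."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # json.dumps emits a single line by default, so one data field suffices
    lines.append(f"data: {json.dumps(data)}")
    return "\n".join(lines) + "\n\n"  # a blank line terminates the frame


def stream_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield one frame per generated token, then a closing frame."""
    for index, token in enumerate(tokens):
        yield format_sse({"index": index, "token": token}, event="token")
    yield format_sse({"done": True}, event="end")
```

A Django or Flask streaming response could iterate over `stream_tokens(...)` and send each frame with the `text/event-stream` content type.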

Django and Flask: The 2 Pillars of Python Web Frameworks

Python web development splits across 2 dominant framework categories: full-stack frameworks (Django) and microframeworks (Flask). Each framework occupies a distinct position in the AI web stack and solves a different class of architectural problems. Engineers at companies like Instagram (Django) and Netflix (Flask) deploy these frameworks at very large scale.

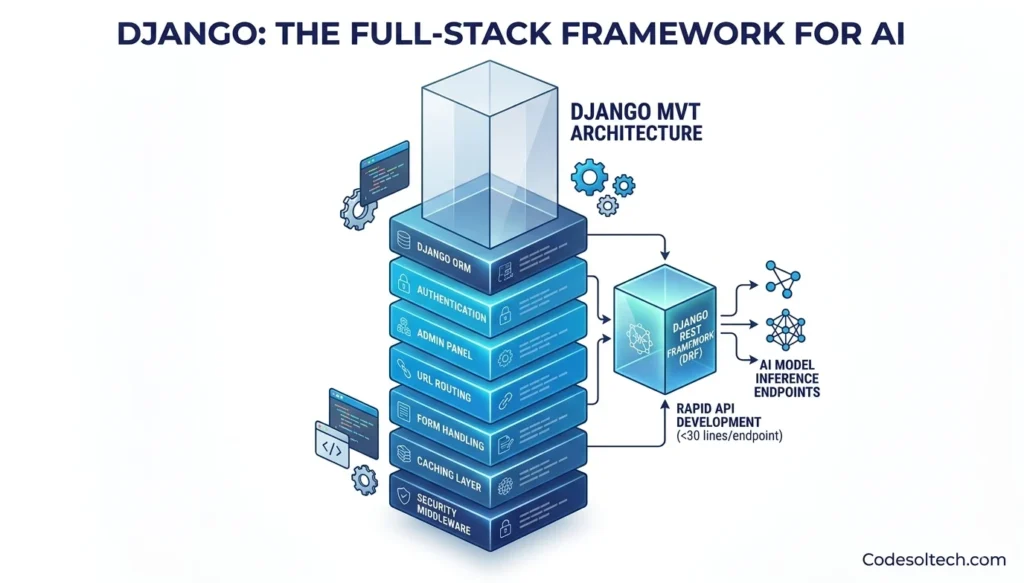

Django: The Full-Stack Framework for AI-Integrated Applications

Django is a high-level Python web framework that follows the Model-View-Template (MVT) architectural pattern. Django ships with 7 built-in components (ORM, authentication, admin panel, URL routing, form handling, caching layer, and security middleware) that eliminate boilerplate code in AI web projects.

Read more about Django-based web development services to understand how these components apply to production projects.

Django REST Framework (DRF) extends Django to produce production-ready REST APIs in fewer than 30 lines of code per endpoint. AI teams use DRF to expose model inference endpoints — the HTTP routes that accept user inputs and return AI-generated predictions or text completions.

Django ORM maps Python objects to relational database tables, which store training data, inference logs, and user interaction histories.

Django’s Key Advantages in AI Web Projects

- Built-in Admin Interface: Django admin provides a zero-configuration dashboard for monitoring AI inference logs and annotated training datasets.

- Scalable ORM: Django ORM supports persistent database connections (with native PostgreSQL connection pooling since Django 5.1) and query optimization, which helps it handle millions of daily AI inference records.

- Security Hardening: Django includes CSRF protection, SQL injection prevention, and XSS filtering out of the box — critical for AI applications processing sensitive user data.

- Async Support: Django 3.1+ supports async views under ASGI, and Django 4.1 added async class-based view handlers and an async ORM interface, enabling concurrent AI model calls without blocking the request thread.

- Ecosystem Maturity: The Django Package Index lists over 4,000 third-party packages, including django-ai-assistant and django-llm for LLM integration.

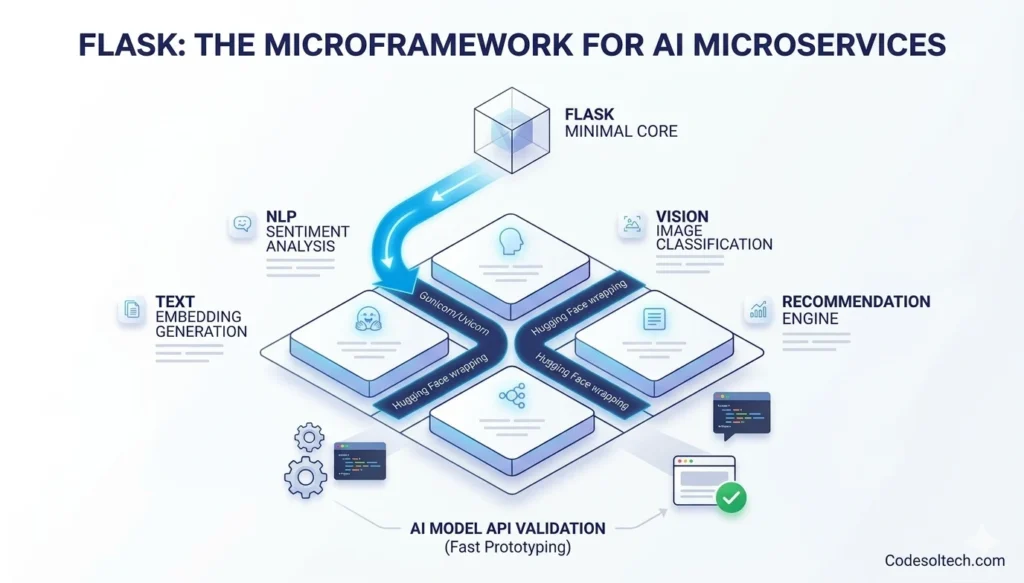

Flask: The Microframework for AI Microservices

Flask is a lightweight Python microframework that provides routing, request handling, and templating — and nothing else by default. Flask’s minimal core (under 2,000 lines of source code) makes it the preferred choice for building AI microservices: small, single-purpose services that perform one AI task such as sentiment analysis, image classification, or text embedding generation.

Explore how Flask fits into modern microservice architectures built by CodeSolTech.

Flask deploys as a WSGI application behind Gunicorn, or as an ASGI application behind Uvicorn when wrapped with the WsgiToAsgi adapter from asgiref. A Flask endpoint that wraps a small Hugging Face model can respond to POST requests in under 200ms on a standard cloud VM with no additional infrastructure, though actual latency depends on model size and hardware.

This latency profile fits within Google’s recommended threshold for server response time (TTFB under 800ms), a diagnostic metric that supports Core Web Vitals.

Flask’s Key Advantages for AI Microservices

- Minimal Overhead: Flask adds less than 1ms of framework overhead per request, maximizing throughput for high-frequency AI inference calls.

- Blueprints: Flask Blueprints modularize AI services — one Blueprint per model type (NLP, vision, recommendation) within a single application.

- Rapid Prototyping: Flask launches a development server in 3 lines of code, making it ideal for validating AI model APIs before production deployment.

- Flexible Integration: Flask integrates with any Python ML library without opinionated dependencies, unlike heavier frameworks.

- Container-Native: Flask applications containerize into Docker images under 200MB, enabling fast deployment to Kubernetes clusters running AI workloads.

Django vs Flask: Choosing the Right Framework for AI Web Projects

| Criteria | Django | Flask |
|---|---|---|
| Architecture | Monolithic / MVT | Microservice / Minimal |
| Built-in ORM | Yes (Django ORM) | No (use SQLAlchemy) |
| Best Use Case | Full AI web apps with user auth & DB | Single-purpose AI inference APIs |
| Learning Curve | Steep (many conventions) | Gentle (minimal conventions) |
| Async Support | Native async views (Django 3.1+ under ASGI) | Async views in Flask 2.0+; ASGI via Uvicorn / asgiref |
| Community Packages | 4,000+ | 2,000+ |

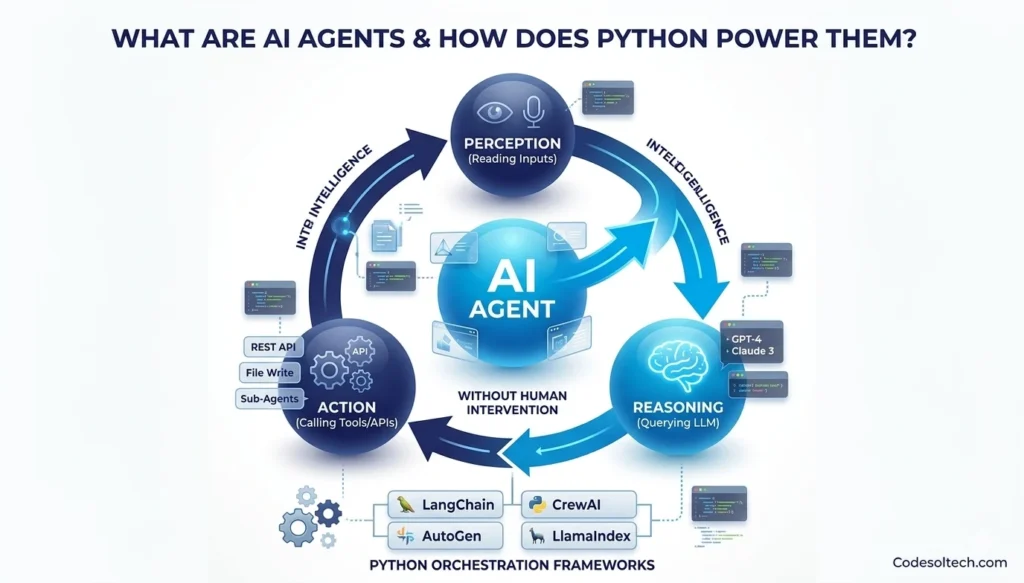

What Are AI Agents and How Does Python Power Them?

AI Agents are autonomous software programs that perceive their environment, make decisions using a Large Language Model (LLM), and execute actions through tool calls — all without direct human intervention in each step.

A single AI Agent orchestrates 3 core operations: perception (reading inputs), reasoning (querying an LLM), and action (calling APIs, writing files, or spawning sub-agents).

Python powers AI Agents through 4 production-ready orchestration frameworks: LangChain, AutoGen, CrewAI, and LlamaIndex. Each framework provides a Python class hierarchy that defines Agent personas, tool registries, and memory backends.

These agents connect to web applications built on Django or Flask through REST API hooks or WebSocket channels.

Web-integrated AI Agents transform static websites into conversational interfaces. A Django application embeds an AI Agent as a background Celery task that processes user queries asynchronously, queries a vector database (Pinecone, Weaviate, or Chroma), and streams structured responses back through Django Channels over WebSocket. Learn how CodeSolTech integrates AI Agent solutions into web platforms.
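The vector database lookup in that flow can be sketched in a few lines. The class below is a hypothetical in-memory stand-in, not the Pinecone, Weaviate, or Chroma API, and the three-dimensional embeddings are made-up values; a real system would get embeddings from a model and assumes they are non-zero vectors.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


class TinyVectorStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, text: str, embedding: list[float]) -> None:
        self._items.append((text, embedding))

    def search(self, query: list[float], top_k: int = 1) -> list[str]:
        # Rank every stored item by similarity to the query, best first
        ranked = sorted(
            self._items,
            key=lambda item: cosine_similarity(query, item[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:top_k]]


store = TinyVectorStore()
store.add("refund policy", [0.9, 0.1, 0.0])
store.add("shipping times", [0.1, 0.9, 0.0])
store.add("account login", [0.0, 0.1, 0.9])
```

Calling `store.search([0.8, 0.2, 0.0])` returns the entry closest to the query direction. Production vector databases replace this linear scan with approximate nearest-neighbor indexes.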

The 5 Components of a Python-Powered AI Agent in a Web Context

- LLM Core: The reasoning engine — typically GPT-4, Claude 3, or an open-source model via Hugging Face — that processes natural language instructions.

- Tool Registry: A Python dictionary of callable functions (web search, database query, API call) that the agent executes based on LLM output.

- Memory Module: A vector store or conversation buffer that persists context across multiple agent turns, enabling coherent multi-step web tasks.

- Planner: A Python class that breaks complex goals into subtasks and assigns them to specialized sub-agents in a multi-agent pipeline.

- Web Interface Hook: A Django view or Flask endpoint that receives user input, triggers the agent, and streams the result back to the client in real time.
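The components above can be sketched as a small perceive-reason-act loop. Everything here is illustrative: `stub_llm` is a hard-coded stand-in for a real LLM call, the tool names are hypothetical, and the JSON decision format is an assumption rather than the protocol of LangChain, AutoGen, or CrewAI.

```python
import json
from typing import Callable


def get_weather(city: str) -> str:
    """Hypothetical tool; a real one would call an external API."""
    return f"Sunny in {city}"


def add_numbers(a: float, b: float) -> str:
    return str(a + b)


# Tool registry: maps a tool name to a callable
TOOLS: dict[str, Callable[..., str]] = {
    "get_weather": get_weather,
    "add_numbers": add_numbers,
}


def stub_llm(history: list[dict]) -> str:
    """Stand-in for an LLM call; returns a JSON decision string."""
    last = history[-1]
    if last["role"] == "user":
        return json.dumps({"tool": "get_weather", "args": {"city": "Paris"}})
    # After seeing a tool result, produce the final answer
    return json.dumps({"final": f"The forecast: {last['content']}"})


def run_agent(user_input: str, max_steps: int = 5) -> str:
    history = [{"role": "user", "content": user_input}]  # perception and memory
    for _ in range(max_steps):
        decision = json.loads(stub_llm(history))  # reasoning
        if "final" in decision:
            return decision["final"]
        tool = TOOLS[decision["tool"]]  # action
        result = tool(**decision["args"])
        history.append({"role": "tool", "content": result})
    return "Stopped: step limit reached"
```

The `max_steps` cap is a deliberate design choice: it keeps a misbehaving model from looping on tool calls indefinitely.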

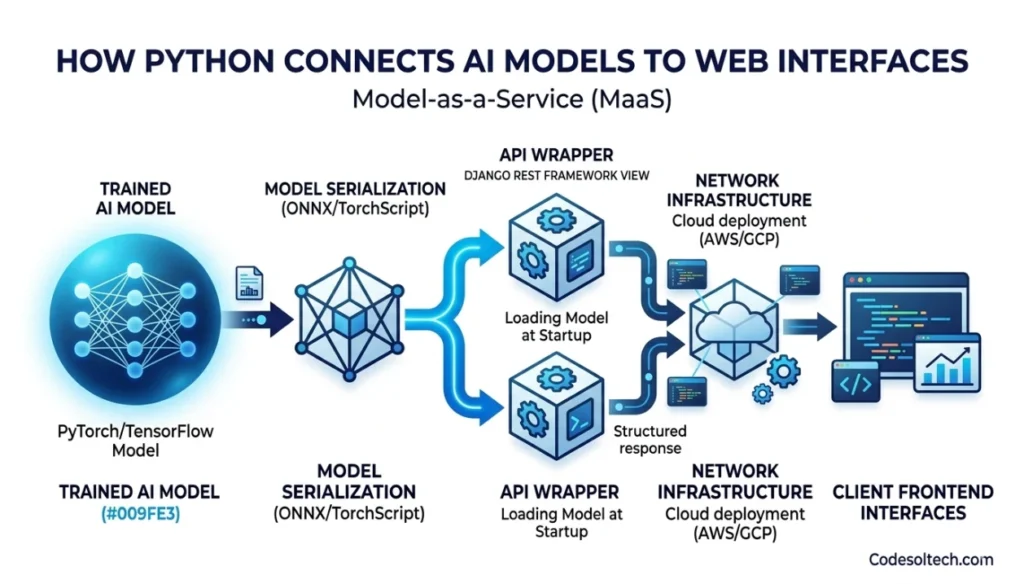

How Python Connects AI Models to Web Interfaces

Python connects trained AI models to web interfaces through the Model-as-a-Service (MaaS) pattern. In this pattern, a Python application wraps a model in a REST or gRPC API, exposing a single /predict endpoint that accepts JSON payloads and returns inference results.

Django REST Framework and Flask both implement this pattern with native serializers and request parsers.
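Stripped of any framework, the /predict pattern looks roughly like the WSGI application below. This is a sketch under stated assumptions: `predict` is a toy averaging function standing in for a real model, the `features` payload field is hypothetical, and `call_app` is only a helper for exercising the app without a server. In Flask or DRF, the routing and parsing shown here are handled by the framework.

```python
import io
import json


def predict(features: list[float]) -> dict:
    """Hypothetical model: replace with a real inference call."""
    score = sum(features) / len(features)
    return {"label": "positive" if score >= 0.5 else "negative", "score": score}


def app(environ, start_response):
    """Minimal WSGI application exposing POST /predict."""
    headers = [("Content-Type", "application/json")]
    if environ["PATH_INFO"] != "/predict" or environ["REQUEST_METHOD"] != "POST":
        start_response("404 Not Found", headers)
        return [b'{"error": "not found"}']
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        payload = json.loads(environ["wsgi.input"].read(length))
        features = [float(x) for x in payload["features"]]
        if not features:
            raise ValueError("empty features")
    except (ValueError, KeyError, TypeError):
        # Reject malformed input before it reaches the model
        start_response("400 Bad Request", headers)
        return [b'{"error": "invalid payload"}']
    start_response("200 OK", headers)
    return [json.dumps(predict(features)).encode("utf-8")]


def call_app(path: str, method: str, body: bytes = b""):
    """Helper that invokes the WSGI app directly, without a server."""
    captured = {}
    environ = {
        "PATH_INFO": path,
        "REQUEST_METHOD": method,
        "CONTENT_LENGTH": str(len(body)),
        "wsgi.input": io.BytesIO(body),
    }
    chunks = app(environ, lambda status, headers: captured.update(status=status))
    return captured["status"], json.loads(b"".join(chunks))
```

Because `app` is a plain WSGI callable, the same function could be served by Gunicorn or by `wsgiref.simple_server` during development.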

FastAPI, a third Python framework, complements Django and Flask specifically for high-throughput AI inference APIs. FastAPI generates OpenAPI documentation automatically, and its ASGI-native design lets a single worker handle many concurrent requests; actual throughput depends on hardware, worker count, and model latency.

Engineers at companies like Uber and Microsoft deploy FastAPI for real-time ML serving. Discover the full spectrum of Python backend development options available through CodeSolTech.

Python AI-to-Web Integration Workflow (Step by Step)

- Step 1 — Train or Load Model: A Python script trains a model with PyTorch or loads a pre-trained checkpoint from Hugging Face Hub.

- Step 2 — Serialize Model: The model exports to ONNX or TorchScript format, reducing inference latency by up to 3× versus eager-mode execution.

- Step 3 — Wrap in API: A Django or Flask view function loads the model at startup and exposes a POST endpoint that runs inference on the request payload.

- Step 4 — Deploy to Cloud: The Python app deploys to AWS ECS, Google Cloud Run, or Azure Container Apps behind a load balancer.

- Step 5 — Connect Frontend: A React or Next.js frontend calls the Python API endpoint and renders AI responses in the browser UI.

Python Libraries That Enable AI-Driven Web Features

Python provides a library for every layer of AI-driven web functionality. The PyPI repository hosts over 500,000 packages, with more than 12,000 categorized under machine learning and artificial intelligence as of 2024 (PyPI). Engineers select libraries based on 3 criteria: inference speed, deployment footprint, and API compatibility with Django or Flask.

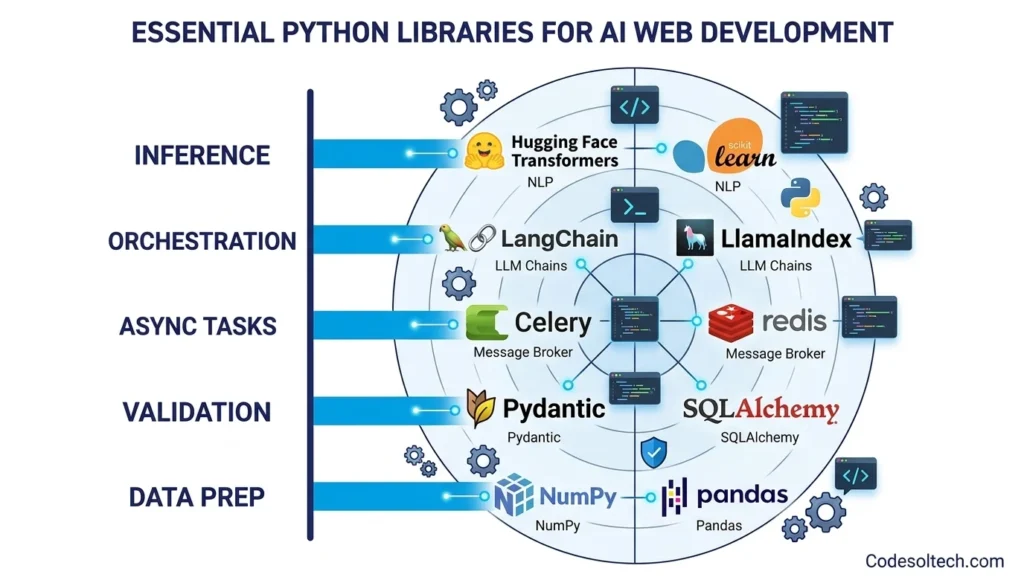

Essential Python Libraries for AI Web Development

- Hugging Face Transformers: Loads pre-trained LLMs (BERT, GPT, LLaMA) with 3 lines of code for NLP tasks like classification, summarization, and Q&A.

- LangChain: Chains LLM calls, tool use, and memory into multi-step AI Agent pipelines deployable within Django Celery tasks.

- Celery: Executes long-running AI inference tasks asynchronously, preventing HTTP timeout errors on model calls that exceed 30 seconds.

- Redis: Caches AI inference results and serves as the message broker between Django and Celery AI workers, reducing redundant model calls by up to 60%.

- SQLAlchemy: Maps Python objects to SQL databases in Flask applications, storing AI training metadata and inference logs in PostgreSQL or MySQL.

- Pydantic: Validates incoming JSON payloads at AI API endpoints with zero-boilerplate schema declarations, preventing malformed data from reaching the model.

- NumPy / Pandas: Preprocesses structured data into model-ready tensors or DataFrames before inference — the most common data preparation layer in Python AI pipelines.

- HTTPX: Performs async HTTP calls from Python AI Agents to external APIs (weather, search, financial data), with connection pooling for high-throughput agent workflows.

Why Do Developers Choose Python Over Other Languages for AI Web Development?

Developers choose Python over Java, JavaScript (Node.js), and Go for AI web development because Python holds first-class support from every major AI research lab and cloud provider.

TensorFlow, PyTorch, JAX, and OpenAI’s official SDK all ship Python as their primary client language, meaning Python developers access new AI capabilities before any other ecosystem.

Node.js runs CPU-bound benchmark code roughly 2× faster than Python, but Python remains the stronger choice for AI workloads because ML libraries like PyTorch and TensorFlow execute C++/CUDA kernels internally, leaving Python to handle only the orchestration overhead.

The actual computation runs at native speed regardless of Python’s interpreted runtime.

Python vs Competing Languages: AI Web Development Comparison

- Python vs Node.js: Python leads in ML library depth and LLM SDK support; Node.js leads in raw I/O concurrency for non-AI tasks.

- Python vs Go: Python provides broader AI tooling; Go provides superior memory safety and binary deployment for infrastructure services.

- Python vs Java: Python requires 5× fewer lines of code for equivalent ML pipeline tasks; Java offers stronger type safety for enterprise systems.

- Python vs R: Python handles both web development and statistical modeling; R focuses on statistical analysis, and its web tooling (Shiny, plumber) is narrower than Django or Flask.

CodeSolTech’s engineering team builds full-stack Python applications that combine Django backends, Flask microservices, and AI Agent pipelines into a single deployable architecture. View our AI-driven web development portfolio for real-world case studies.

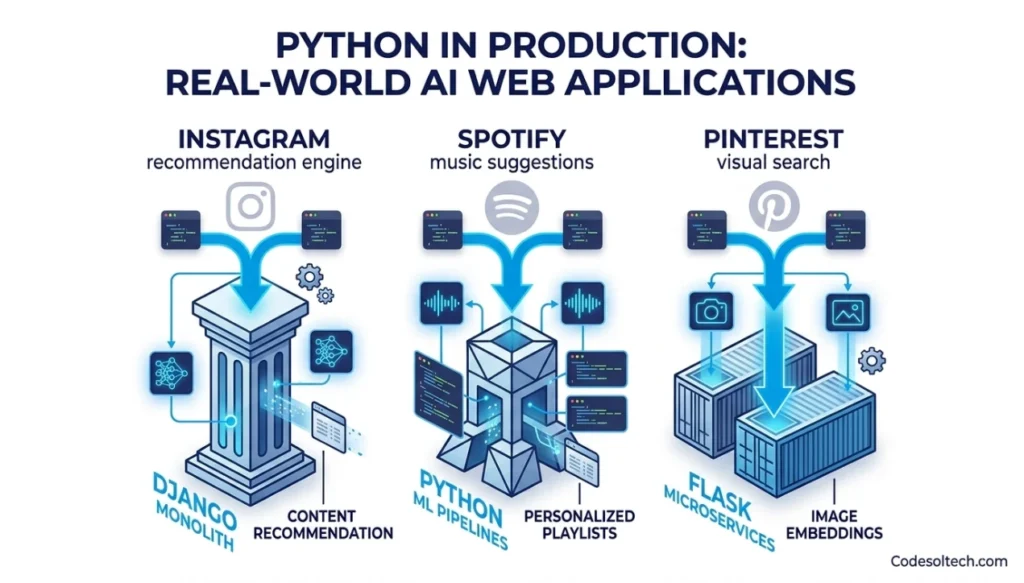

Python in Production: Real-World AI Web Applications

Python powers AI features in 6 of the world’s 10 most-visited websites. Instagram’s content recommendation engine runs on a Django monolith that serves over 100 million daily active users. Spotify’s music recommendation system uses Python ML pipelines that generate personalized playlists for 602 million users (Q4 2023).

Pinterest’s visual search engine processes image embeddings through Python microservices built on Flask.

In the enterprise SaaS segment, Python AI web apps follow a 3-tier deployment model: (1) a Python AI inference layer on GPU-enabled VMs, (2) a Django or FastAPI API gateway on standard compute, and (3) a CDN-cached frontend that calls the API.

This model scales AI features independently from the web frontend, reducing infrastructure costs by 35–55% compared to tightly-coupled monolithic deployments.

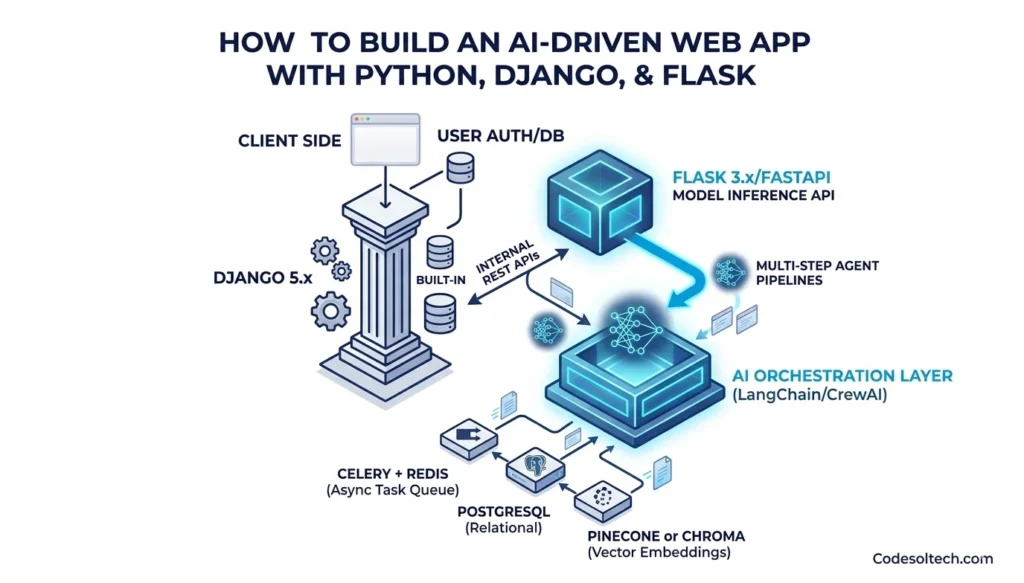

How to Build an AI-Driven Web App with Python, Django, and Flask

Building an AI-driven web application with Python requires selecting the right framework for each component. Django handles user authentication, admin, and database operations. Flask or FastAPI handles model inference endpoints.

LangChain or CrewAI manages AI Agent workflows. All 3 components communicate over internal REST APIs or message queues.
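The message-queue side of that communication usually follows an enqueue-then-poll shape. The sketch below imitates it with a thread pool standing in for Celery workers and Redis; `slow_inference`, the job dictionary, and the state names are assumptions for illustration, not Celery's API.

```python
import time
import uuid
from concurrent.futures import Future, ThreadPoolExecutor

executor = ThreadPoolExecutor(max_workers=2)  # stand-in for Celery workers
jobs: dict[str, Future] = {}


def slow_inference(text: str) -> dict:
    """Hypothetical long-running model call."""
    time.sleep(0.05)
    return {"summary": text[:10]}


def enqueue(text: str) -> str:
    """What a POST handler would do: queue the job and return its id at once."""
    job_id = uuid.uuid4().hex
    jobs[job_id] = executor.submit(slow_inference, text)
    return job_id


def status(job_id: str) -> dict:
    """What a polling GET handler would return for a job id."""
    future = jobs.get(job_id)
    if future is None:
        return {"state": "unknown"}
    if not future.done():
        return {"state": "pending"}
    return {"state": "done", "result": future.result()}
```

The web request returns as soon as `enqueue` hands back an id, so a slow model never holds an HTTP connection open. A real deployment would also record failures, since `future.result()` re-raises any exception from the worker.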

Recommended Python AI Web Stack for Production

- Web Framework: Django 5.x (main app) + Flask 3.x (inference API)

- AI Orchestration: LangChain or CrewAI for multi-step AI Agent pipelines

- Database: PostgreSQL (relational) + Pinecone or Chroma (vector embeddings)

- Task Queue: Celery + Redis for async AI inference jobs

- Model Serving: Hugging Face Inference API or local ONNX runtime

- Deployment: Docker containers on AWS ECS or Google Cloud Run

- Monitoring: Prometheus + Grafana for AI inference latency and error rate tracking

CodeSolTech engineers design and deploy this exact stack for clients across fintech, healthcare, and e-commerce verticals. Contact CodeSolTech to discuss your AI web project architecture.

Final Words

Python is not just a tool for AI web development; it is the infrastructure. Django delivers full-stack reliability. Flask delivers microservice precision. AI Agents deliver autonomous intelligence.

Together, these 3 pillars give engineers everything needed to build, deploy, and scale intelligent web applications in 2025 and beyond.

Ready to Build Your AI-Driven Web Application?

CodeSolTech architects and deploys production-grade Python AI web applications using Django, Flask, and AI Agent frameworks. From concept to cloud deployment, our team handles the full stack.