Database schema design is the structural blueprint that defines how data is stored, organized, and related within a database management system (DBMS). A schema determines table definitions, column data types, relationships, constraints, and indexing strategies.

Poor schema design produces query bottlenecks, data anomalies, and system failures at scale. Engineers who design schemas with scalability as a first-class concern reduce 60–80% of performance regressions before a single line of application code is written.

What Is Database Schema Design?

Database schema design is the process of defining the logical and physical structure of a database, including entities, attributes, data types, primary keys, foreign keys, and integrity constraints.

A schema exists at 3 distinct levels: the conceptual schema (entity-relationship model), the logical schema (normalized table definitions), and the physical schema (storage engine configuration, index types, and partitioning).

The schema acts as a contract between the application layer and the data storage layer. Changes to a production schema without migration planning cause data corruption, downtime, and transaction rollbacks.

A well-designed schema supports horizontal and vertical scaling without destructive alterations to existing table structures.

Why Does Schema Design Matter for Scalability?

Schema design directly controls query execution plans, I/O cost, and lock contention. A database serving 10,000 queries per second requires a schema where every frequently executed query resolves in a single index scan rather than a full table scan.

The difference between a covering index and a missing index on a 500-million-row table is a 200× gap in query latency.

Scalability in database systems breaks into 2 primary dimensions:

- Vertical scaling (scale-up): Adding CPU cores, RAM, and faster NVMe storage to a single database server. Vertical scaling is bounded by hardware limits and single-server SPOF (single point of failure).

- Horizontal scaling (scale-out): Distributing data across multiple nodes using sharding, replication, or federated databases. Horizontal scaling requires a schema designed for distributed access patterns from the start.

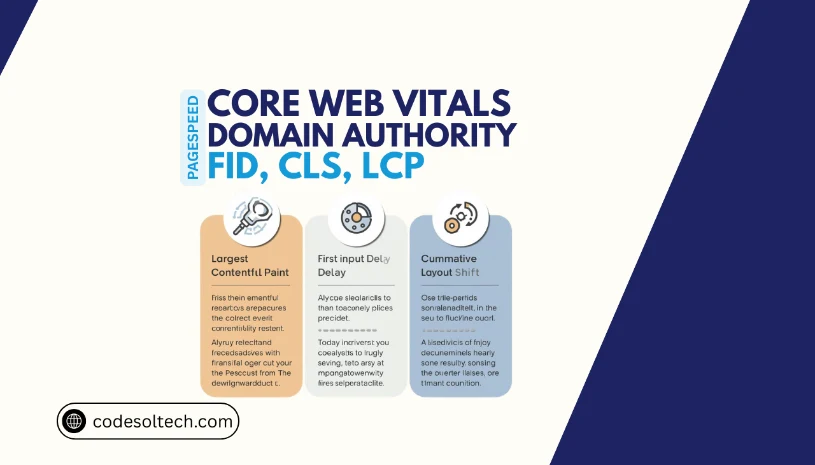

SQL vs NoSQL: Which Schema Model Scales Better?

SQL (Structured Query Language) databases enforce a rigid, predefined schema using relational tables, ACID transactions, and foreign key constraints. NoSQL databases use flexible or schema-less data models — including document, key-value, wide-column, and graph structures — and prioritize horizontal distribution over strict consistency.

The correct choice between SQL and NoSQL is determined by the application’s data model, consistency requirements, and access patterns — not by popularity or trend.

SQL Schema Characteristics

- Uses normalized relational tables linked by foreign keys

- Enforces referential integrity at the database engine level

- Supports complex JOIN operations across multiple tables

- Guarantees ACID properties: Atomicity, Consistency, Isolation, Durability

- Best suited for transactional systems (ERP, banking, e-commerce orders)

- Examples: PostgreSQL, MySQL, MariaDB, Microsoft SQL Server

NoSQL Schema Characteristics

- Uses dynamic or document-based schemas with no predefined column types

- Scales horizontally across commodity hardware clusters

- Trades ACID guarantees for BASE (Basically Available, Soft state, Eventual consistency) semantics

- Eliminates JOIN cost by embedding related data in a single document or row

- Best suited for high-throughput read/write workloads (social feeds, IoT telemetry, real-time analytics)

- Examples: MongoDB, Cassandra, DynamoDB, Redis, Couchbase

What Is Normalization in Database Schema Design

Normalization is the process of organizing relational database tables to eliminate data redundancy and prevent update, insert, and delete anomalies. E.F. Codd introduced normalization theory in 1970, defining a sequence of normal forms (NF) that each impose stricter structural rules on table definitions.

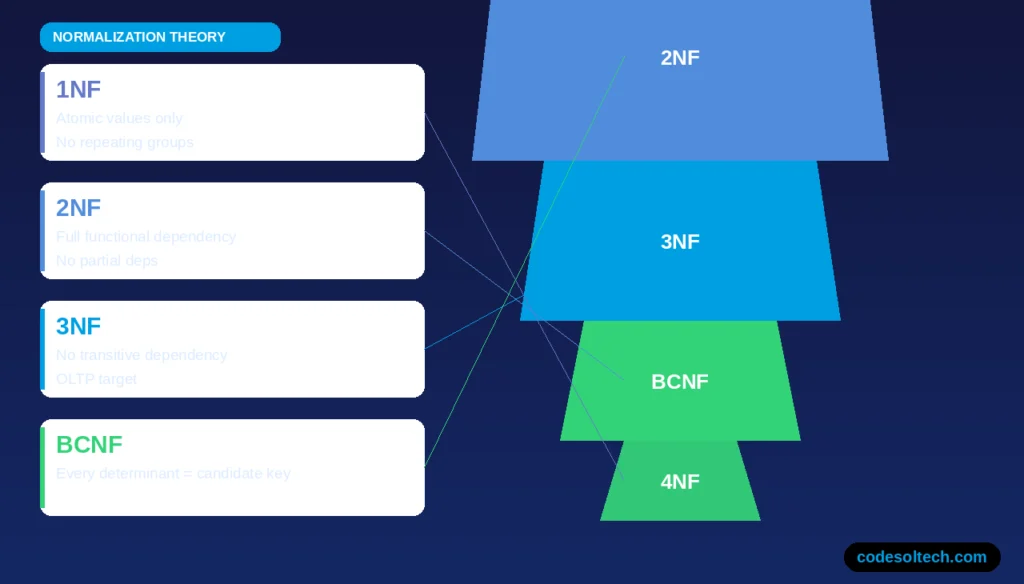

The 5 Primary Normal Forms

- 1NF (First Normal Form): Every column holds atomic (indivisible) values. No repeating groups or arrays are stored in a single cell.

- 2NF (Second Normal Form): The table is in 1NF, and every non-key attribute is fully functionally dependent on the entire primary key. This eliminates partial dependencies in composite-key tables.

- 3NF (Third Normal Form): The table is in 2NF, and no non-key attribute is transitively dependent on the primary key. 3NF is the target for most OLTP (Online Transaction Processing) schemas.

- BCNF (Boyce-Codd Normal Form): A stricter version of 3NF where every determinant is a candidate key. BCNF resolves anomalies that 3NF does not catch in tables with overlapping composite keys.

- 4NF (Fourth Normal Form): The table is in BCNF with no multi-valued dependencies. 4NF applies to schemas that model complex many-to-many-to-many relationships.

When Should You Denormalize a Schema

Denormalization is the deliberate introduction of redundancy into a schema to reduce JOIN cost and improve read throughput. Engineers apply denormalization when profiling reveals that normalized query plans execute more than 4 table JOINs per request at high concurrency.

Denormalization techniques include:

- Storing pre-computed aggregate columns (e.g.,

order_totalalongside line items) - Duplicating foreign key lookup data into the primary table to eliminate lookup JOINs

- Creating materialized views that persist JOIN results as physical tables

- Using wide tables in columnar stores (e.g., Amazon Redshift, Google BigQuery) for OLAP workloads

How Do You Design a Database Schema for High Scale

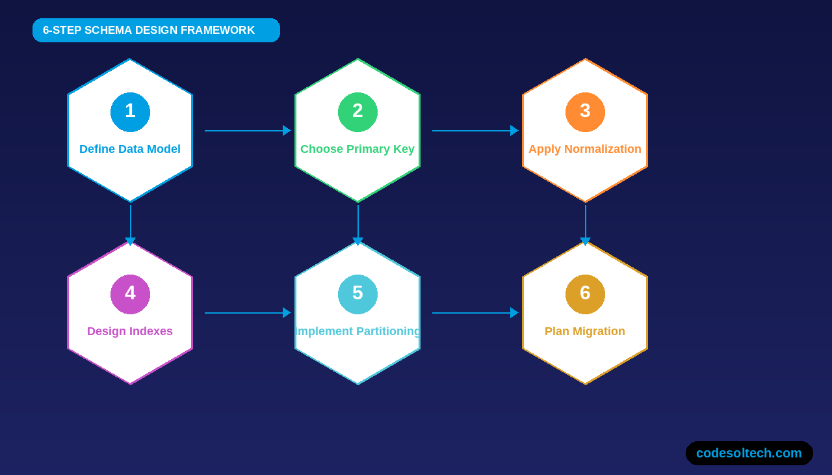

Designing a database schema for large-scale requires 6 foundational decisions made before the first table is created. Each decision constrains or enables specific scaling strategies later in the system’s lifecycle.

1. Define the Data Model Type

The data model determines the schema structure. 4 primary data models exist in production systems:

- Relational model: Tables with rows, columns, and foreign key relationships. Used in SQL databases.

- Document model: Self-contained JSON/BSON documents with embedded sub-documents. Used in MongoDB and Couchbase.

- Wide-column model: Rows identified by a row key with dynamic columns grouped into column families. Used in Cassandra and HBase.

- Graph model: Nodes (entities) and edges (relationships) with properties. Used in Neo4j and Amazon Neptune for highly connected data.

2. Choose Primary Key and Partition Key Strategy

The primary key is the schema’s most performance-critical decision. Sequential integer IDs (auto-increment) create write hotspots on the last B-tree page in distributed SQL databases. UUIDs distribute writes across nodes but consume 16 bytes per row and degrade cache locality.

ULIDs (Universally Unique Lexicographically Sortable Identifiers) combine sortability with uniqueness and eliminate hotspot writes.

In NoSQL wide-column databases like Cassandra, the partition key controls which node stores the row. A poorly chosen partition key creates uneven data distribution (hot partitions), where 1 node handles 80%+ of all write traffic while others remain idle.

3. Apply the Correct Normalization Level

OLTP schemas normalize to 3NF to minimize write amplification and maintain data integrity across thousands of concurrent transactions. OLAP schemas denormalize into star schema or snowflake schema structures to minimize the number of JOINs required by analytical queries that scan billions of rows.

4. Design Indexes for Access Patterns

Every index accelerates reads and degrades writes. An index on a column forces the database engine to maintain a separate ordered data structure (B-tree or LSM-tree) updated on every INSERT, UPDATE, and DELETE. Over-indexing a write-heavy table increases write latency by 30–50% per additional index.

The correct indexing strategy begins with query profiling, not assumption.

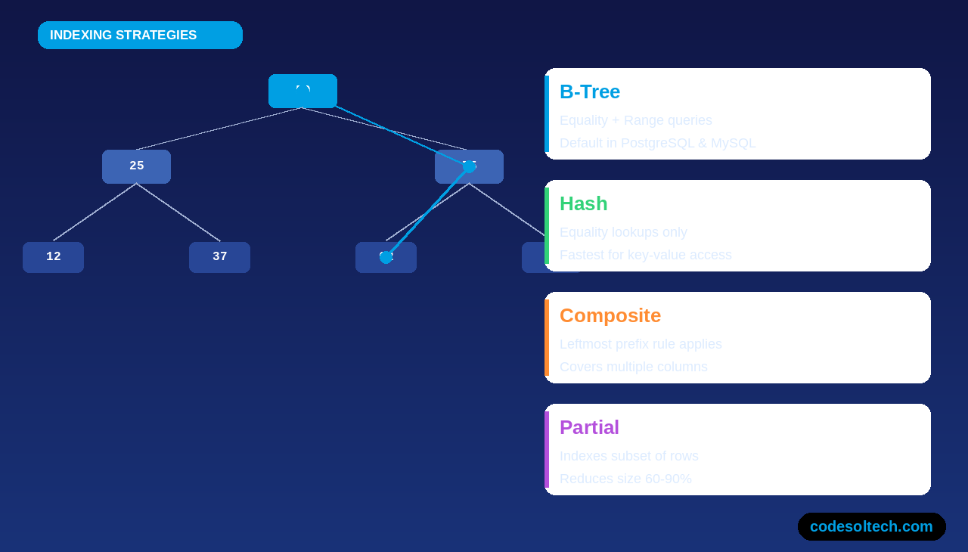

4 index types serve distinct access patterns:

- B-tree index: Supports equality and range queries. Default index type in PostgreSQL, MySQL, and Oracle.

- Hash index: Supports equality lookups only. Faster than B-tree for exact-match key-value retrieval but cannot serve range scans.

- Composite index: Covers multiple columns. Follows the “leftmost prefix rule” — queries must filter on the leading column to use the index.

- Partial index: Indexes a subset of rows matching a predicate. Reduces index size by 60–90% for sparse data distributions.

5. Implement Table Partitioning

Table partitioning divides a large table into smaller, physically separate segments while maintaining a single logical table interface. Partitioning reduces query scan range when the partition key matches the WHERE clause filter — a technique called partition pruning.

3 partitioning strategies exist in SQL databases:

- Range partitioning: Divides rows by a range of values (e.g.,

created_atby month). Optimal for time-series data and log tables. - Hash partitioning: Distributes rows evenly across N partitions using a hash function on the partition key. Eliminates hot partitions for write-heavy workloads.

- List partitioning: Assigns rows to partitions based on a discrete set of values (e.g.,

region = 'US'orregion = 'EU'). Useful for multi-tenant or geographic data isolation.

6. Plan for Schema Migration

Schema migrations alter the structure of a live production database. Destructive migrations — DROP COLUMN, DROP TABLE, or renaming columns — require zero-downtime procedures using the expand-contract pattern:

- Expand: Add the new column or table alongside the existing structure without removing old columns.

- Migrate: Backfill data into the new column using background jobs that process rows in batches of 1,000–10,000.

- Contract: Remove the old column or table only after 100% of application traffic reads from the new structure.

What Is Sharding and How Does It Relate to Schema Design?

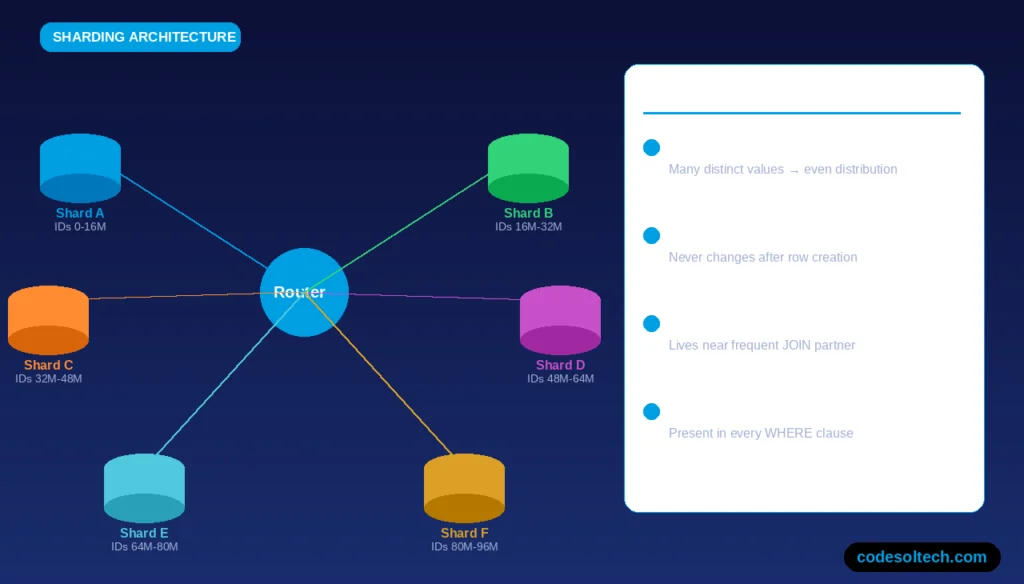

Sharding is a horizontal scaling technique that partitions a database’s rows across multiple independent database instances called shards. Each shard holds a non-overlapping subset of the total data.

Sharding is a schema-level decision because the shard key the column used to route rows to specific shards, must be embedded in every table that participates in cross-shard queries.

An incorrect shard key produces 2 failure modes at scale:

- Hot shards: Uneven data distribution routes 70–90% of queries to a single shard, eliminating the throughput benefit of horizontal scaling.

- Cross-shard JOINs: Queries that JOIN two tables stored on different shards require scatter-gather execution across every shard, increasing latency by 5–20× compared to co-located data.

Correct shard key selection criteria:

- High cardinality (many distinct values) to distribute data evenly

- Immutable after row creation to prevent shard re-routing on UPDATE

- Co-located with the most frequent JOIN partner (e.g., shard by

tenant_idin multi-tenant SaaS systems) - Present in every query’s WHERE clause to enable shard targeting

Star Schema vs Snowflake Schema: Which Is Better for Analytics?

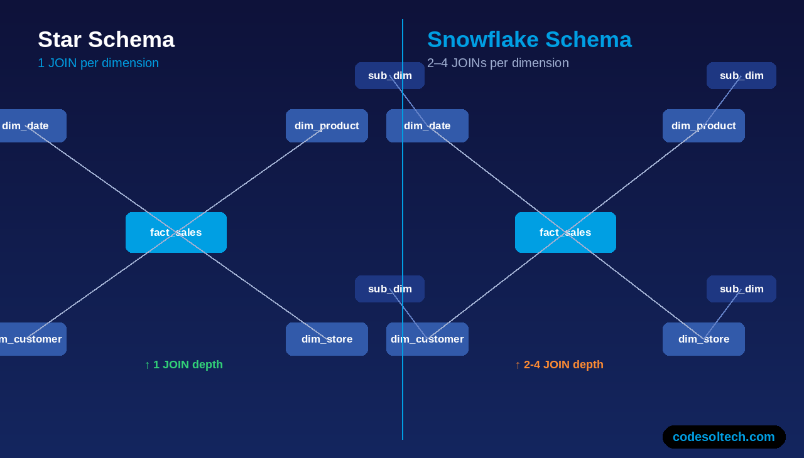

Star schema and snowflake schema are 2 denormalized schema patterns used in data warehouses and OLAP systems. Both replace the normalized 3NF structure with a central fact table surrounded by dimension tables.

Star Schema

A star schema contains 1 central fact table linked directly to fully denormalized dimension tables. Each dimension table holds all attribute hierarchies in a single wide table (e.g., a dim_date table with columns for day, week, month, quarter, and year).

Star schemas reduce JOIN depth to exactly 1 JOIN per dimension, minimizing query complexity for BI tools and report engines.

Snowflake Schema

A snowflake schema normalizes the dimension tables of a star schema into additional sub-dimension tables. A dim_product table in a snowflake schema references a separate dim_category table rather than embedding category attributes. Snowflake schemas reduce storage redundancy by 20–40% compared to star schemas but increase query complexity with additional JOINs.

Comparison Table

| Dimension | Star Schema | Snowflake Schema |

|---|---|---|

| JOIN depth | 1 JOIN per dimension | 2–4 JOINs per dimension |

| Query performance | Faster for aggregations | Slower due to deeper JOIN trees |

| Storage efficiency | Lower (redundancy present) | Higher (normalized dimensions) |

| BI tool compatibility | Excellent (most tools optimized for star) | Moderate |

| Maintenance overhead | Low | Higher (more tables to maintain) |

How Does Indexing Strategy Affect Schema Performance at Scale?

Index strategy is inseparable from schema design. Every table in a production schema requires an explicit index audit before deployment. The audit examines the 3 most frequent query patterns per table: point lookups, range scans, and sort operations.

A covering index stores all columns required by a query inside the index structure itself, eliminating the need to access the base table (a heap fetch). Covering indexes reduce I/O by 70–90% for read-heavy queries but increase index storage size proportionally to the number of included columns.

Index maintenance adds write amplification to every DML operation:

- An INSERT into a table with 5 indexes executes 5 index insertions plus 1 heap insertion — 6 total write operations per row.

- An UPDATE on an indexed column triggers an index delete and an index insert — 2 index operations per affected column per row.

- A DELETE removes the row from the heap and from every index that contains that row’s key.

What Are the Most Common Schema Design Mistakes at Scale?

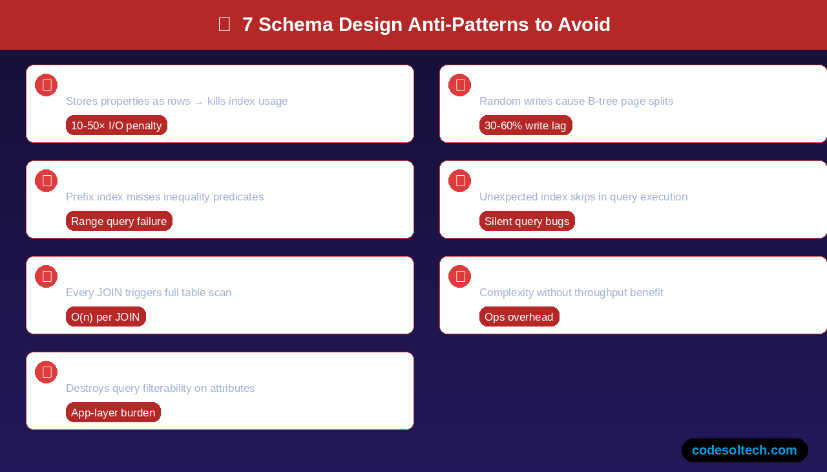

Production schema failures originate from 7 recurring design errors:

- Entity-Attribute-Value (EAV) anti-pattern: Storing entity properties as rows instead of columns creates unpredictable query costs and prevents index utilization. EAV tables with 100 million rows produce query plans with 10–50× the I/O of equivalent normalized schemas.

- Unbounded TEXT columns on indexed tables: Indexing a VARCHAR(MAX) or TEXT column forces the engine to use a prefix index, which misses inequality predicates and degrades range query performance.

- Missing foreign key indexes: Every foreign key column that participates in a JOIN requires its own index. An unindexed foreign key forces a full table scan on every JOIN operation.

- Storing serialized objects in a single column: Packing JSON blobs or serialized arrays into a VARCHAR column moves business logic into the application layer and destroys query filterability on embedded attributes.

- Using GUID/UUID as a clustered index key: Random UUID values destroy the sequential write pattern that B-tree indexes require, causing page splits, index fragmentation, and 30–60% higher write latency on InnoDB and SQL Server.

- Ignoring NULL handling: NULL values in indexed columns require special treatment in every SQL engine. Indexing NULLable columns without understanding the engine’s NULL storage behavior produces unexpected index skips during query execution.

- Premature sharding: Sharding a schema before a single server reaches its write throughput ceiling adds distributed systems complexity without proportional benefit. Engineers should profile and vertically scale first, then shard when writes exceed 80% of single-node capacity.

How Do NoSQL Schemas Handle Scale Differently Than SQL?

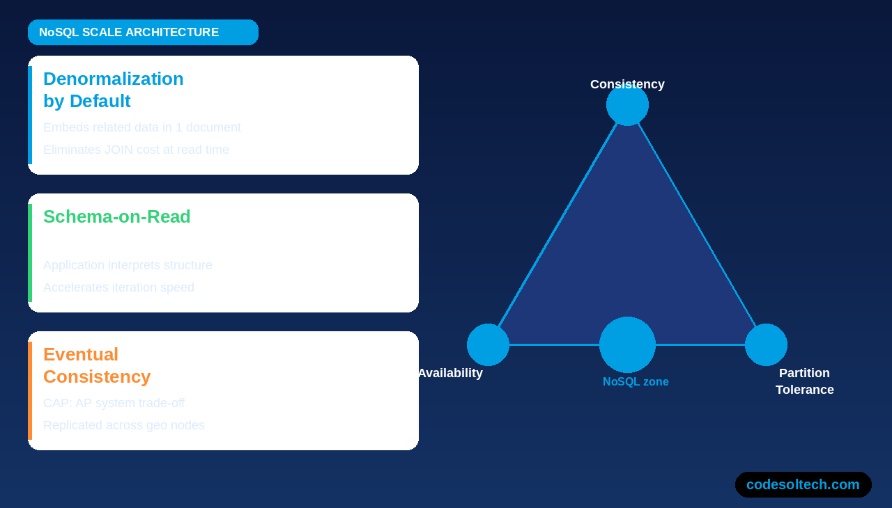

NoSQL schemas achieve horizontal scale by eliminating the relational constraints that limit SQL distribution. 3 architectural properties distinguish NoSQL schema design from relational schema design at scale:

Denormalization by Default

NoSQL document databases (MongoDB, Couchbase) embed related data inside a single document rather than distributing it across normalized tables. A user profile document embeds the user’s 10 most recent orders directly inside the user object, eliminating the JOIN that a relational schema requires.

This design reduces read latency, but increases write amplification when embedded data changes frequently.

Schema-on-Read vs Schema-on-Write

SQL databases enforce schema-on-write: every row inserted must conform to the table’s predefined column definitions and data types.

NoSQL document databases enforce schema-on-read: documents with different structures coexist in the same collection, and the application layer interprets the schema when reading data.

Schema-on-read accelerates iteration speed but transfers data validation responsibility from the database engine to the application code.

Eventual Consistency and the CAP Theorem

The CAP theorem states that a distributed database system provides at most 2 of the following 3 guarantees simultaneously: Consistency, Availability, and Partition tolerance. NoSQL databases choose Availability and Partition tolerance (AP systems), accepting eventual consistency for write operations replicated across geographically distributed nodes.

SQL databases with synchronous replication prioritize Consistency and Partition tolerance (CP systems).

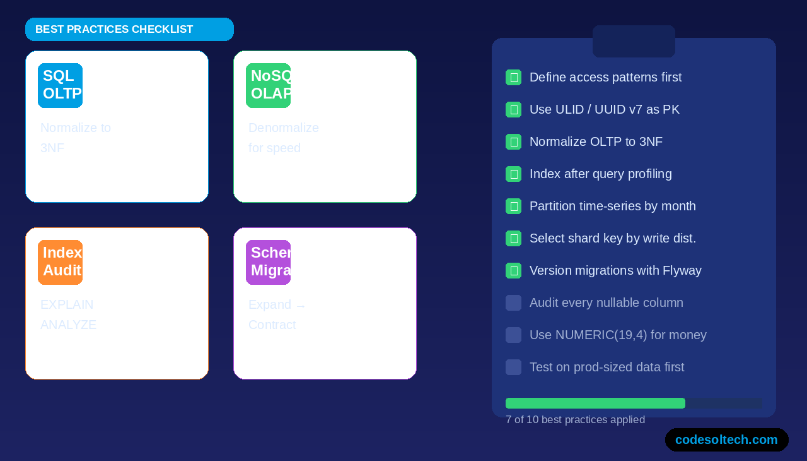

Database Schema Design Best Practices for Scale: A Reference Checklist

- Define all access patterns and query types before creating the first table

- Assign every table a single-column surrogate primary key using ULID or UUID v7 for distributed systems

- Normalize all OLTP schemas to 3NF before evaluating denormalization

- Create indexes only after query profiling identifies missing index candidates using EXPLAIN ANALYZE (PostgreSQL) or EXPLAIN FORMAT=JSON (MySQL)

- Partition time-series tables by month or week from the initial schema design — retrofitting partitioning to a 1-billion-row table requires full table reconstruction

- Select the shard key based on write distribution analysis, not column name intuition

- Version every schema change using a migration tool (Flyway, Liquibase, Alembic, or Atlas) to maintain a reproducible schema history

- Audit every nullable column — replace NULL with a default sentinel value where the application logic treats NULL and a zero-length string identically

- Enforce data type precision: use NUMERIC(19,4) for monetary values, not FLOAT or DOUBLE, to prevent floating-point rounding errors in financial calculations

- Test schema changes against production-sized data volumes in a staging environment before deploying to production

Final Words

Database schema design determines whether a system scales to millions of users or collapses under load.

SQL normalization eliminates anomalies. NoSQL flexibility enables distribution. Indexing, partitioning, and sharding extend capacity horizontally.

Schema decisions made at day one govern system behavior at year five. Design intentionally.

Ready to Architect a Schema That Scales?

Download the free Database Schema Design Checklist a 10-point pre-deployment audit used by senior database engineers to catch scalability gaps before they reach production.