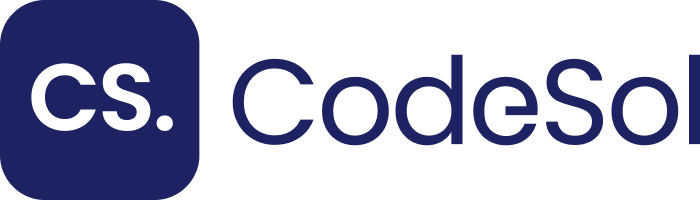

Core Web Vitals are a set of 3 quantifiable, user-centric performance metrics that Google uses as confirmed ranking signals within the Page Experience update. These metrics, Largest Contentful Paint (LCP), First Input Delay (FID, replaced by Interaction to Next Paint in March 2024), and Cumulative Layout Shift (CLS), operate as inputs into Google’s search ranking algorithm and correlate with Domain Authority growth over time.

Domain Authority (DA), as defined by Moz, is a logarithmic score from 0 to 100 that predicts a domain’s ranking potential based on link equity and site quality signals.

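Google’s own Page Experience signals overlap with DA growth trajectories: sites that pass all 3 Core Web Vitals thresholds qualify for the Page Experience ranking benefit, which can accelerate organic link acquisition. Google also announced plans to test a visual indicator for such pages in search results, although it has not become a standard search feature.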

What Core Web Vitals Measure and Why They Affect Rankings

Google introduced Core Web Vitals in May 2020 and integrated them as ranking factors in June 2021. Each metric measures a distinct dimension of real-world user experience, collected via the Chrome User Experience Report (CrUX), which aggregates Field Data from millions of real Chrome browser sessions over a 28-day rolling window.

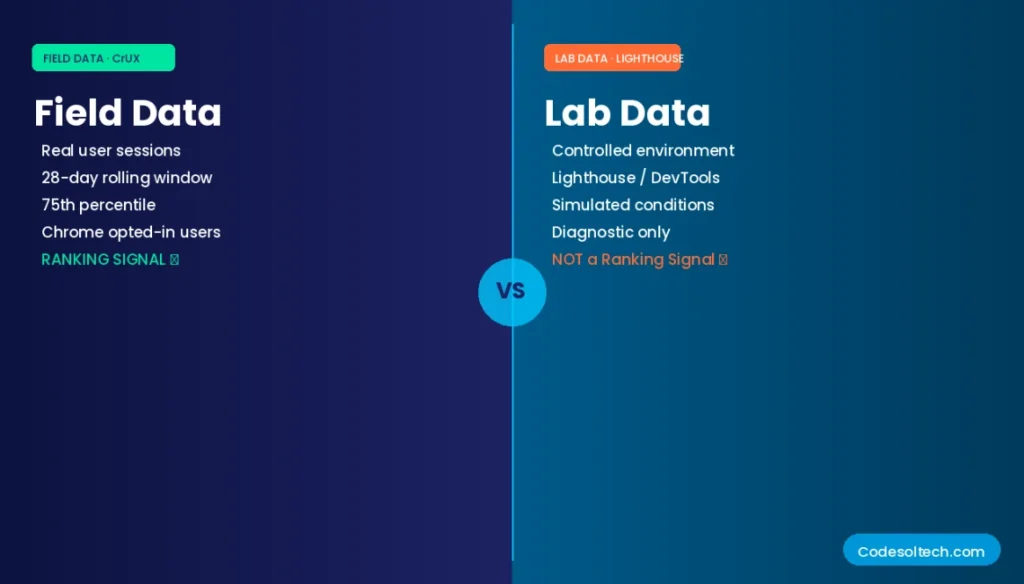

Field Data differs from Lab Data in one critical way: Field Data reflects actual user conditions across diverse device types, network speeds, and geographic locations. Google’s ranking algorithm uses Field Data exclusively to evaluate a page’s Core Web Vitals status.

Lab Data, generated by tools like Lighthouse, serves as a diagnostic instrument but does not directly influence ranking decisions.

The 3 Core Web Vitals Metrics: Definitions and Thresholds

Each metric has a defined “Good” threshold, a “Needs Improvement” range, and a “Poor” threshold, as follows:

- Largest Contentful Paint (LCP): Measures the render time of the largest image or text block visible in the viewport. Good: ≤2.5s. Needs Improvement: 2.5s–4.0s. Poor: >4.0s.

- First Input Delay (FID): Measures the time from when a user first interacts with a page to when the browser begins processing that interaction. Good: ≤100ms. Needs Improvement: 100–300ms. Poor: >300ms.

- Cumulative Layout Shift (CLS): Measures the visual instability caused by unexpected layout shifts during a page’s lifespan, reported as the largest burst (session window) of layout shift scores. Good: ≤0.1. Needs Improvement: 0.1–0.25. Poor: >0.25.

Note: Google deprecated FID in March 2024 and replaced it with Interaction to Next Paint (INP) as the responsiveness metric. INP thresholds are: Good: ≤200ms. Needs Improvement: 200–500ms. Poor: >500ms. Historical FID Field Data remains accessible in the CrUX archive for longitudinal analysis.
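The bands above can be expressed as a small lookup. The following is a minimal sketch rather than any official tooling: the `THRESHOLDS` table and the `classify` helper are illustrative names, and the numbers are copied directly from the list above (LCP in seconds, FID and INP in milliseconds, CLS unitless).

```python
# Thresholds copied from the list above: (good_max, needs_improvement_max).
# LCP is in seconds, FID and INP in milliseconds, CLS is unitless.
THRESHOLDS = {
    "LCP": (2.5, 4.0),
    "FID": (100, 300),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def classify(metric: str, value: float) -> str:
    """Return the band a metric value falls into."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "Good"
    if value <= needs_improvement_max:
        return "Needs Improvement"
    return "Poor"


if __name__ == "__main__":
    # Hypothetical 75th percentile values for one page.
    for metric, value in [("LCP", 2.1), ("INP", 350), ("CLS", 0.31)]:
        print(f"{metric}={value}: {classify(metric, value)}")
```

Because a value exactly on a boundary counts toward the better band here (2.5s is "Good"), the helper matches the "≤" notation used in the list.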

PageSpeed Insights: The Diagnostic Bridge Between Field Data and Lab Data

PageSpeed Insights (PSI) is a free Google tool that reports both Field Data from the CrUX dataset and Lab Data generated by Lighthouse. PSI fetches real-world data for URLs with sufficient traffic thresholds in the CrUX database and displays a page-level and origin-level breakdown of all Core Web Vitals.

A URL appears in the CrUX dataset only when it has enough qualifying field sessions within the 28-day window; Google does not publish the exact minimum.

PSI scores range from 0 to 100 and are categorized into 3 bands: Poor (0–49), Needs Improvement (50–89), and Good (90–100). The PSI score itself is not a direct ranking signal. Google uses the underlying Field Data metrics — specifically the 75th percentile values for LCP, FID/INP, and CLS — as ranking inputs, not the composite PSI score.
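To make the split between Field Data and the composite score concrete, here is a minimal sketch that builds a PSI API v5 request URL and summarizes a response. The helper names (`psi_request_url`, `score_band`, `summarize`) and the trimmed sample response are illustrative assumptions; the sample is hand-written for this example, not real output, and a live response contains many more fields.

```python
from typing import Optional
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url: str, strategy: str = "mobile",
                    api_key: Optional[str] = None) -> str:
    """Build the GET URL for one PSI query (strategy: 'mobile' or 'desktop')."""
    params = {"url": page_url, "strategy": strategy}
    if api_key:
        params["key"] = api_key
    return f"{PSI_ENDPOINT}?{urlencode(params)}"


def score_band(score: int) -> str:
    """Map a 0-100 PSI score to the 3 bands described above."""
    if score >= 90:
        return "Good"
    if score >= 50:
        return "Needs Improvement"
    return "Poor"


def summarize(response: dict) -> dict:
    """Separate field percentiles (CrUX) from the lab score (Lighthouse)."""
    field = response.get("loadingExperience", {}).get("metrics", {})
    # Lighthouse reports the performance score as a 0-1 fraction.
    lab = response["lighthouseResult"]["categories"]["performance"]["score"]
    score = round(lab * 100)
    return {
        "field_percentiles": {name: m["percentile"] for name, m in field.items()},
        "lab_score": score,
        "lab_band": score_band(score),
    }


if __name__ == "__main__":
    # Hand-written, heavily trimmed sample in the shape of a PSI response.
    sample = {
        "loadingExperience": {
            "metrics": {
                "LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 2300, "category": "FAST"},
            }
        },
        "lighthouseResult": {"categories": {"performance": {"score": 0.72}}},
    }
    print(psi_request_url("https://example.com/"))
    print(summarize(sample))
```

Keeping the field percentiles and the lab score in separate keys mirrors the point above: only the former feed ranking, while the latter is a diagnostic.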

The 5 Key PageSpeed Optimization Levers That Directly Affect Core Web Vitals

- Server Response Time (TTFB): A TTFB above 800ms leaves little room for LCP to reach the ≤2.5s Good threshold. Deploy edge caching and reduce backend processing to achieve TTFB under 200ms.

- Render-Blocking Resources: Synchronous JavaScript and CSS in the <head> block LCP. Defer non-critical JavaScript and inline critical CSS to eliminate render-blocking delays.

- Image Optimization: Unoptimized images are the leading cause of poor LCP scores. Serve images in WebP or AVIF format, implement responsive srcset attributes, and apply lazy loading for below-the-fold images.

- JavaScript Execution Time: Long JavaScript tasks on the main thread increase FID and INP. Break tasks longer than 50ms using web workers, code splitting, or requestIdleCallback.

- Layout Stability (CLS): Ads, embeds, and dynamically injected content without reserved dimensions cause CLS. Assign explicit width and height attributes to all media elements to prevent layout shifts.
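Two of the levers above, render-blocking scripts and media without reserved dimensions, can be spotted with a static pass over the HTML. The sketch below uses Python's standard `html.parser`; the `VitalsAudit` class and the sample markup are hypothetical, and a static scan is only an approximation, since it does not see stylesheets, CSS-defined dimensions, or content injected at runtime.

```python
from html.parser import HTMLParser


class VitalsAudit(HTMLParser):
    """Collect external scripts that block parsing in <head> and media
    elements that lack explicit width/height attributes."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking_scripts = []
        self.unsized_media = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)  # boolean attributes such as defer map to None
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head:
            deferred = ("defer" in attr or "async" in attr
                        or attr.get("type") == "module")
            if "src" in attr and not deferred:
                self.blocking_scripts.append(attr["src"])
        elif tag in ("img", "iframe", "video"):
            if "width" not in attr or "height" not in attr:
                self.unsized_media.append(attr.get("src", "<no src>"))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


SAMPLE_HTML = """
<html><head>
<script src="/app.js"></script>
<script src="/analytics.js" defer></script>
</head><body>
<img src="/hero.webp" width="1200" height="600">
<img src="/banner.png">
</body></html>
"""

if __name__ == "__main__":
    audit = VitalsAudit()
    audit.feed(SAMPLE_HTML)
    print("Render-blocking scripts:", audit.blocking_scripts)
    print("Media without dimensions:", audit.unsized_media)
```

On the sample markup, only `/app.js` and `/banner.png` are flagged, because the other script is deferred and the hero image reserves its space.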

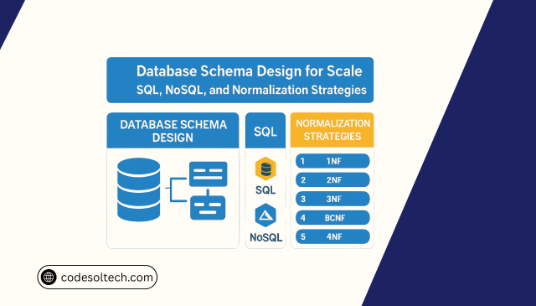

First Input Delay (FID): Mechanism, Measurement, and Optimization

FID quantifies the main-thread blocking time between a user’s first discrete interaction (click, tap, or key press) and the browser’s response to that interaction. FID is a Field Data-only metric because it requires a real user interaction to generate a value; Lighthouse and other Lab tools cannot measure FID.

FID reflects the JavaScript execution burden on the main thread at the precise moment of first interaction.

The primary cause of high FID is long JavaScript tasks executing on the main thread during page load. A JavaScript task exceeding 50ms is classified as a “Long Task” by the Long Tasks API. Each Long Task blocks the main thread and prevents the browser from responding to user input, directly increasing FID.

Sites with more than 3 Long Tasks during load consistently produce FID values above the 100ms Good threshold.
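The 50ms cutoff can be applied to a list of task durations to see how much main-thread time is actually blocked. The sketch below is a simplified illustration: the function names and sample durations are hypothetical, and it sums the portion of each task beyond 50ms, which is the idea behind Lighthouse's Total Blocking Time lab metric, without restricting the tasks to a particular phase of page load as Lighthouse does.

```python
LONG_TASK_MS = 50  # cutoff used by the Long Tasks API


def long_tasks(durations_ms):
    """Return only the tasks that exceed the 50ms Long Task cutoff."""
    return [d for d in durations_ms if d > LONG_TASK_MS]


def total_blocking_time(durations_ms):
    """Sum the time beyond 50ms across all Long Tasks."""
    return sum(d - LONG_TASK_MS for d in long_tasks(durations_ms))


if __name__ == "__main__":
    # Hypothetical main-thread task durations (ms) recorded during load.
    tasks = [30, 120, 45, 250, 80]
    print("Long Tasks:", long_tasks(tasks))
    print("Blocking time (ms):", total_blocking_time(tasks))
```

For the sample durations, 3 of the 5 tasks are Long Tasks and together block the main thread for 300ms (70 + 200 + 30).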

4 Techniques to Reduce FID Below the 100ms Threshold

- Code Splitting: Divide large JavaScript bundles into smaller chunks using dynamic import() syntax. Deliver only the JavaScript required for the initial viewport on page load.

- Tree Shaking: Remove unused JavaScript code during the build process using webpack or Rollup. Tree shaking eliminates dead code that inflates bundle size and increases main-thread parse time.

- Third-Party Script Auditing: Third-party scripts from ad networks, analytics platforms, and social widgets account for 40–60% of Long Tasks on typical publisher sites. Audit and defer all non-essential third-party scripts.

- Web Workers: Offload computationally intensive operations to Web Workers, which execute in a separate thread and do not block the main thread.

Field Data vs. Lab Data: Why CrUX Field Data Determines Your Ranking Status

Field Data, sourced from the Chrome User Experience Report (CrUX), represents the 75th percentile performance values from real user sessions collected over 28 rolling days. Google’s ranking algorithm evaluates a URL’s Core Web Vitals status using exclusively Field Data.

A page must achieve “Good” status at the 75th percentile for all 3 metrics to earn the Page Experience ranking signal benefit.

The 75th percentile threshold means 75% of real user sessions experience a metric value at or below the measured score. Using this percentile prevents improvements that reach only the fastest users from changing a page’s status: optimizing for the fastest 50% of users while ignoring slower devices and connections does not move the 75th percentile value into the “Good” band.

Mobile and desktop Core Web Vitals are scored separately; a URL can pass on desktop and fail on mobile simultaneously.
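A short worked example shows why speeding up only the fastest sessions leaves the status unchanged. This is a sketch under stated assumptions: the `p75` helper uses the nearest-rank method, which may differ from how CrUX computes its percentiles, and all LCP samples (in seconds) are invented for illustration.

```python
import math


def p75(samples):
    """75th percentile using the nearest-rank method (an assumption here)."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


# Hypothetical LCP samples in seconds, 8 sessions each.
baseline = [1.2, 1.4, 1.6, 1.9, 2.8, 3.1, 3.6, 4.4]
fast_half_improved = [0.8, 0.9, 1.0, 1.1, 2.8, 3.1, 3.6, 4.4]
slow_half_improved = [1.2, 1.4, 1.6, 1.9, 2.0, 2.3, 2.6, 4.4]

# Mobile and desktop are evaluated separately.
by_form_factor = {
    "phone": baseline,
    "desktop": [0.9, 1.1, 1.3, 1.5, 1.8, 2.0, 2.2, 3.0],
}

if __name__ == "__main__":
    for label, data in [("baseline", baseline),
                        ("fastest half improved", fast_half_improved),
                        ("slowest half improved", slow_half_improved)]:
        print(f"{label}: p75 = {p75(data)}s")
    for form_factor, data in by_form_factor.items():
        status = "pass" if p75(data) <= 2.5 else "fail"
        print(f"{form_factor}: p75 = {p75(data)}s ({status})")
```

In this data set the 75th percentile stays at 3.1s when only the fastest half improves, and drops to 2.3s once the slower sessions are addressed. The same page also passes on desktop (2.0s) while failing on phone (3.1s).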

6 Primary Sources and Access Points for Field Data

- Chrome browser sessions from opted-in users who have enabled usage data sharing in Chrome settings.

- Google Search Console Core Web Vitals report, which aggregates Field Data at origin and URL level and groups URLs by similar pages.

- PageSpeed Insights Field Data panel, accessible via the PSI API with a URL-level query.

- The CrUX Dashboard, a Looker Studio template that visualizes 28-day trend data for any origin in the CrUX dataset.

- The CrUX BigQuery dataset, which provides monthly historical Field Data going back to 2017 for granular longitudinal analysis.

- The web-vitals JavaScript library, which enables first-party Field Data collection from real user sessions on a site’s own origin, independent of the CrUX dataset.

The Relationship Between Core Web Vitals and Domain Authority Growth

Domain Authority is not a Google metric; it is a third-party predictive score developed by Moz. Google uses its own internal Quality Signals, including the Page Experience signals (Core Web Vitals, HTTPS, Mobile Friendliness, and Intrusive Interstitials), to assess page quality.

However, Core Web Vitals performance correlates with DA growth through a causal chain: better performance increases dwell time, reduces bounce rate, and improves crawl efficiency, all of which increase the probability of organic backlink acquisition.

A 2022 Searchmetrics analysis of 10,000 URLs found that pages with “Good” Core Web Vitals achieved 22% higher average organic click-through rates than pages with “Poor” status in equivalent keyword positions.

Higher CTR generates more organic traffic, which increases the site’s visibility to potential linking domains, producing a compounding effect on link equity and DA over 6–12 months.

How Core Web Vitals Performance Drives DA Growth Through 4 Mechanisms

- Dwell Time Increase: Pages loading LCP under 2.5s retain users 14% longer than pages with LCP above 4s, increasing session depth and reducing pogo-sticking back to SERPs.

- Crawl Budget Efficiency: Fast server response times (TTFB under 200ms) allow Googlebot to crawl more pages per session, ensuring deeper indexing of internal pages that accumulate link equity.

- SERP Visibility: Google announced plans to test a visual “Page Experience” indicator in search results for URLs passing all Core Web Vitals thresholds; where such an indicator appears, it can increase perceived credibility and organic CTR.

- Conversion Rate Impact: Google’s research shows that a 100ms improvement in LCP correlates with a 1% increase in conversion rate, driving revenue growth and justifying investment in link-building campaigns that raise DA.

Core Web Vitals Monitoring: Tools, APIs, and Reporting Cadence

Continuous monitoring of Core Web Vitals Field Data requires a 3-layer tooling stack: origin-level tracking via Google Search Console, URL-level diagnostics via PageSpeed Insights and the PSI API, and real-user monitoring (RUM) via the web-vitals library or a third-party RUM platform.

Each layer provides a distinct granularity of insight that the other layers cannot replicate.

5-Tool Core Web Vitals Monitoring Stack

Google Search Console

Provides Field Data grouped by similar pages (URL groups). Reports URL groups as “Good,” “Needs Improvement,” or “Poor” and sends email notifications when new Core Web Vitals issues are detected.

PageSpeed Insights API

Returns URL-level Field Data JSON via a REST endpoint, enabling automated batch auditing of up to 25,000 URLs per day at the free tier.

CrUX API

Provides direct access to CrUX Field Data at the URL and origin level with separate breakdowns for mobile and desktop form factors.
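A CrUX API query is a single POST request with a JSON body. The sketch below builds such a request for one origin and form factor and pulls the 75th percentile values out of a response. The helper names, the placeholder API key, and the trimmed sample response are assumptions made for illustration; the request is constructed but not sent, and a real key is needed to call the service.

```python
import json
from urllib.request import Request

CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord"


def build_crux_request(origin: str, form_factor: str, api_key: str) -> Request:
    """Build (but do not send) a CrUX API query for one origin.

    form_factor is one of "PHONE", "DESKTOP", or "TABLET".
    """
    body = {
        "origin": origin,
        "formFactor": form_factor,
        "metrics": [
            "largest_contentful_paint",
            "interaction_to_next_paint",
            "cumulative_layout_shift",
        ],
    }
    return Request(
        f"{CRUX_ENDPOINT}?key={api_key}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_p75(response: dict) -> dict:
    """Return {metric_name: p75}; float() handles values returned as strings."""
    metrics = response["record"]["metrics"]
    return {name: float(m["percentiles"]["p75"]) for name, m in metrics.items()}


if __name__ == "__main__":
    request = build_crux_request("https://example.com", "PHONE", "YOUR_API_KEY")
    print(request.get_method(), request.full_url)

    # Hand-written, trimmed sample in the shape of a CrUX API response.
    sample = {
        "record": {
            "metrics": {
                "largest_contentful_paint": {"percentiles": {"p75": 2300}},
                "cumulative_layout_shift": {"percentiles": {"p75": "0.05"}},
            }
        }
    }
    print(extract_p75(sample))
```

To run the query for real, pass the request to `urllib.request.urlopen` and decode the JSON body; running it once with `"PHONE"` and once with `"DESKTOP"` gives the separate breakdowns described above.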

web-vitals Library (v3+)

Captures LCP, FID, CLS, INP, TTFB, and FCP from first-party real user sessions. The collected values can then be sent to Google Analytics 4 or a custom analytics endpoint.

Lighthouse CI

Integrates Lab Data into CI/CD pipelines to catch performance regressions before deployment. Executes Lighthouse audits against build previews on every pull request.

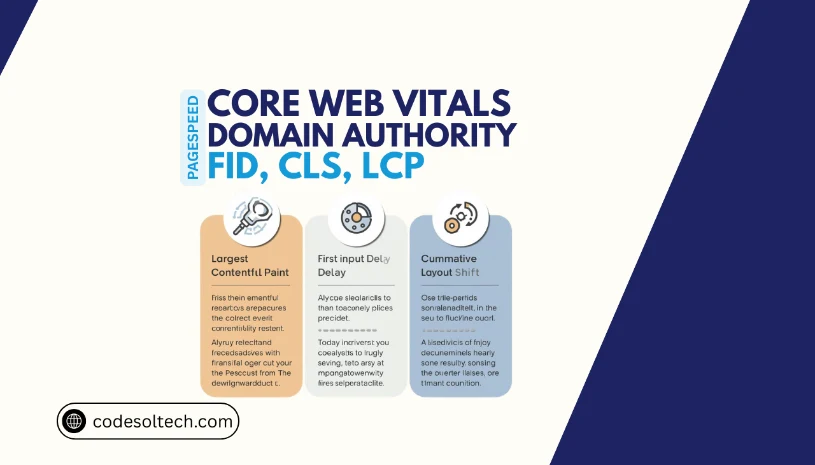

Core Web Vitals Intersection With Technical SEO and Indexing Architecture

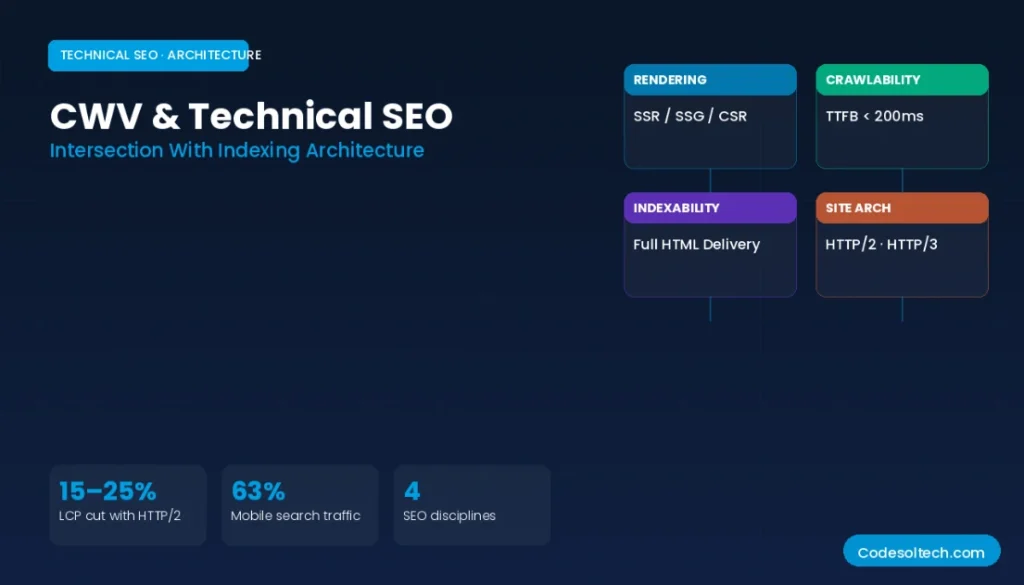

Core Web Vitals optimization intersects with 4 foundational Technical SEO disciplines: crawlability, indexability, rendering architecture, and site architecture. Googlebot’s crawl efficiency depends on TTFB; a slow server delays Googlebot’s ability to process the page and extract its internal links, reducing the total number of pages crawled per session.

Server-Side Rendering (SSR) and Static Site Generation (SSG) both improve LCP by delivering fully rendered HTML to browsers and to Googlebot, eliminating client-side JavaScript rendering delays.

Client-Side Rendering (CSR) frameworks like React and Angular rely on JavaScript execution to render content, which increases LCP and produces high FID/INP values on low-powered mobile devices that represent 63% of Google Search traffic in 2024.

HTTP/2 and HTTP/3 protocols reduce the latency of resource loading by enabling request multiplexing, header compression, and connection reuse. Migrating from HTTP/1.1 to HTTP/2 reduces LCP by an average of 15–25% for pages loading more than 10 critical resources, as measured across 5,000 URLs in the Web Almanac 2023 dataset.

Final Words

Core Web Vitals are measurable, actionable ranking signals — not abstract UX guidelines.

Pages that achieve “Good” status across LCP, FID/INP, and CLS at the 75th percentile of Field Data earn a direct ranking benefit and generate compounding Domain Authority growth through higher CTR, longer dwell time, and increased backlink acquisition.

The optimization pathway is deterministic: measure with CrUX Field Data, diagnose with PageSpeed Insights Lab Data, fix at the infrastructure and code level, and monitor continuously. Sites that execute this cycle consistently outrank competitors with equivalent content quality and link profiles.

► Run a Core Web Vitals audit on your site today using PageSpeed Insights and identify which of your top 10 landing pages fall outside the “Good” threshold. Fix LCP first; it delivers the highest ranking impact per optimization effort.